Summary

Last week, on a day forecast to have record temperatures in the UK, the Environmental Audit Committee warned of 7,000 heat-related deaths every year in the UK by the 2050s if the Government did not act quickly. That prediction was based upon Hajat S, et al 2014. Two principal assumptions behind that prognosis did not hold at the date when the paper was submitted. The first is that the trend of increasing summer heatwaves in the data period of 1993 to 2006 would continue, when by 2012 any such trend had ended. The six summers that followed were distinctly mild, dull and wet. The second is the assumption of no adaptation. Based upon estimates from the extreme 2003 heatwave, most of the projected heat deaths would occur in NHS hospitals, so the assumption is that health professionals in those hospitals would not only ignore the increasing death toll, but also fail to take adaptive measures in response to an observed trend of ever more frequent summer heatwaves. Instead, it would require a central committee to co-ordinate the data gathering and provide the analysis. Without the politicians and bureaucrats producing reports and making recommendations, the world will collapse.

There is a third, implied assumption in the projection. The 7,000 heat-related deaths in the 2050s assumes the complete failure of the Paris Agreement to control greenhouse emissions, let alone keep warming to within any arbitrary 1.5°C or 2°C. That means other countries have failed to follow Britain’s lead in reducing their emissions by 80% by 2050. The implied assumption is that the considerable costs and hardships imposed on the British people by the Climate Change Act 2008 will have been for nothing.

Announcement on the BBC

In the early morning of last Thursday – a day when there were forecasts of possible record temperatures – the BBC published a piece by Roger Harrabin “Regular heatwaves ‘will kill thousands’”, which began

The current heatwave could become the new normal for UK summers by 2040 because of climate change, MPs say.

The Environmental Audit Committee warns of 7,000 heat-related deaths every year in the UK by 2050 if the government doesn’t act quickly.

Higher temperatures put some people at increased risk of dying from cardiac, kidney and respiratory diseases.

The MPs say ministers must act to protect people – especially with an ageing population in the UK.

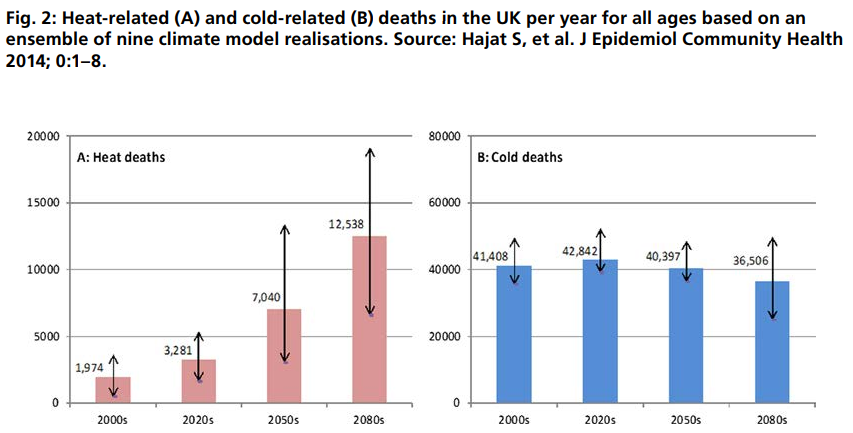

I have left the link in. It is not to a Report by the EAC but to a 2014 paper mentioned once in the report. The paper is Hajat S, et al. J Epidemiol Community Health DOI: 10.1136/jech-2013-202449 “Climate change effects on human health: projections of temperature-related mortality for the UK during the 2020s, 2050s and 2080s”.

Hajat et al 2014

Unusually for a scientific paper, Hajat et al 2014 contains very clear highlighted conclusions.

What is already known on this subject

▸ Many countries worldwide experience appreciable burdens of heat-related and cold-related deaths associated with current weather patterns.

▸ Climate change will quite likely alter such risks, but details as to how remain unclear.

What this study adds

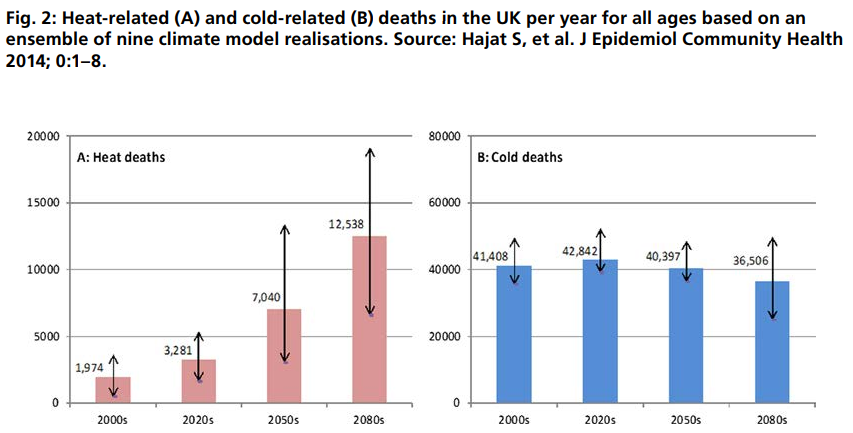

▸ Without adaptation, heat-related deaths would be expected to rise by around 257% by the 2050s from a current annual baseline of around 2000 deaths, and cold-related mortality would decline by 2% from a baseline of around 41 000 deaths.

▸ The increase in future temperature-related deaths is partly driven by expected population growth and ageing.

▸ The health protection of the elderly will be vital in determining future temperature-related health burdens.

There are two things of note. First the current situation is viewed as static. Second, four decades from now heat-related deaths will dramatically increase without adaptation.

Harrabin’s article has no link to the Environmental Audit Committee’s report page, to the full report, to the announcement, or even to the committee’s homepage.

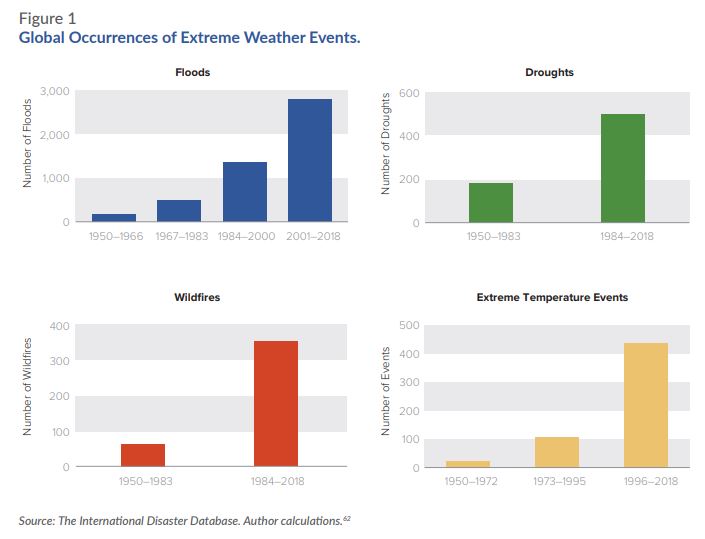

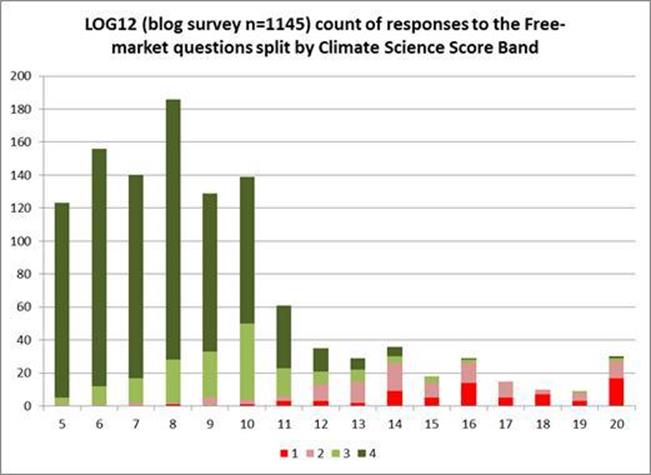

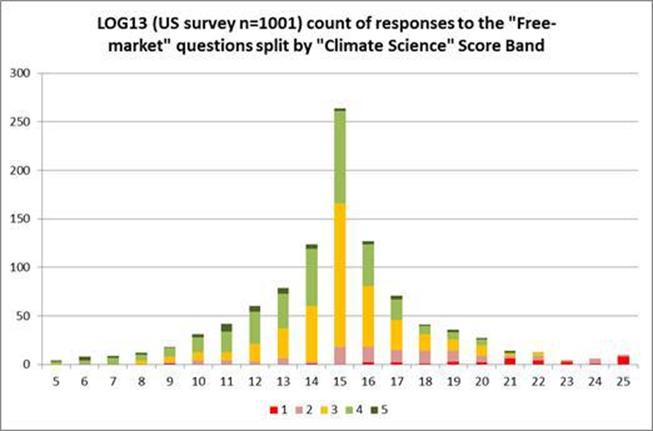

The key graphic in the EAC report relating to heat deaths reproduces figure 3 in the Hajat paper.

The message being put out is that, given certain assumptions, deaths from heatwaves will increase dramatically due to climate change, but cold deaths will only decline very slightly by the 2050s.

The message from the graphs is that if the central projections are true (note the arrows for error bars), in the 2050s cold deaths will still be more than five times the heat deaths. If the desire is to minimize all temperature-related deaths, then even in the 2050s the greater emphasis still ought to be on cold deaths.
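As a rough check on that comparison, the sketch below applies the percentage changes quoted in the paper's summary box to the quoted baselines. It uses only those rounded headline figures, so the results approximate the central projections and are not the paper's own model output; the variable and function names are illustrative.

```python
# Figures quoted from the "What this study adds" box of Hajat et al 2014.
HEAT_BASELINE = 2000    # current annual heat-related deaths (approx.)
COLD_BASELINE = 41000   # current annual cold-related deaths (approx.)
HEAT_RISE_PCT = 257     # projected rise by the 2050s, without adaptation
COLD_FALL_PCT = 2       # projected decline by the 2050s


def project(baseline: float, pct_change: float) -> float:
    """Apply a percentage change (negative for a decline) to a baseline."""
    return baseline * (1 + pct_change / 100)


heat_2050s = project(HEAT_BASELINE, HEAT_RISE_PCT)
cold_2050s = project(COLD_BASELINE, -COLD_FALL_PCT)
ratio = cold_2050s / heat_2050s

print(f"Heat deaths, 2050s: {heat_2050s:,.0f}")
print(f"Cold deaths, 2050s: {cold_2050s:,.0f}")
print(f"Cold deaths per heat death: {ratio:.1f}")
```

On these inputs the heat figure is a little over the 7,000 in the headline, and cold deaths remain between five and six times heat deaths.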

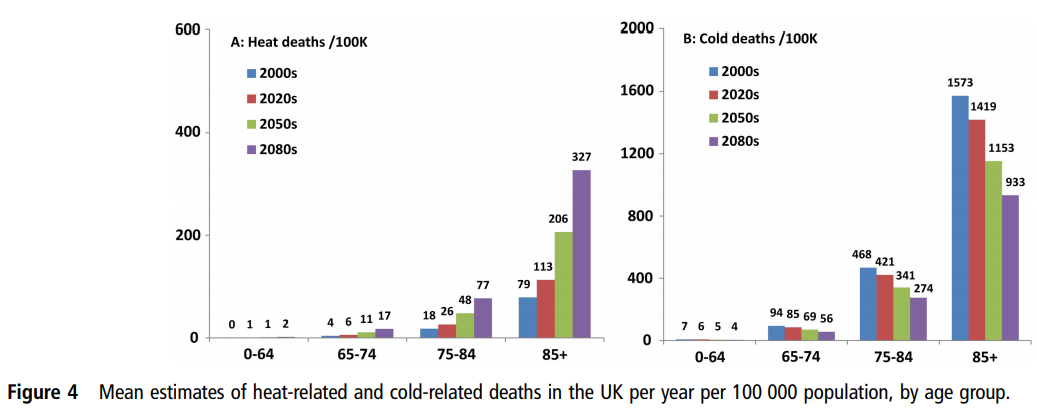

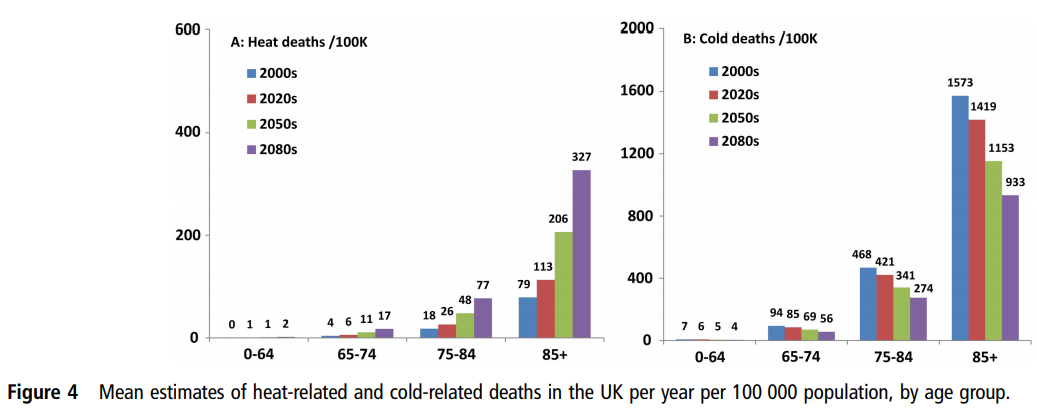

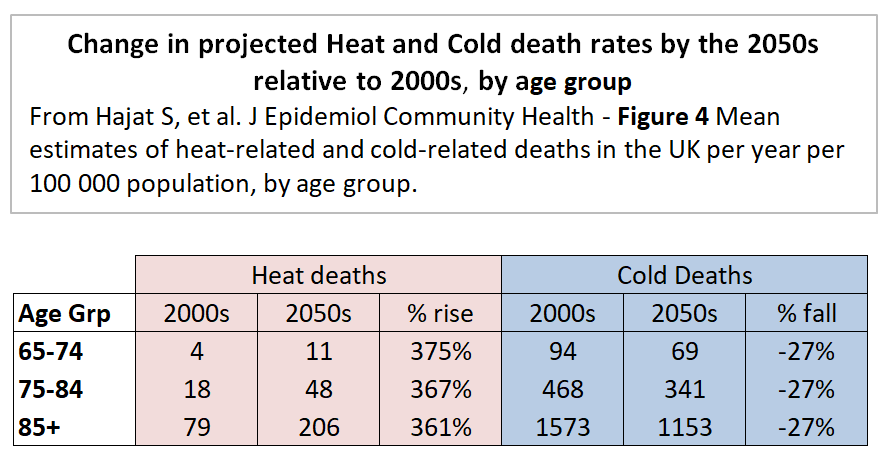

The companion figure 4 of Hajat et al 2014 should also be viewed.

Figure 4 shows that both heat and cold deaths are almost entirely an issue with the elderly, particularly with the 85+ age group.

Hajat et al 2014 looks at regional data for England and Wales. There is something worthy of note in the text to Figure 1(A).

Region-specific and national-level relative risk (95% CI) of mortality due to hot weather. Daily mean temperature 93rd centiles: North East (16.6°C), North West (17.3°C), Yorks & Hum (17.5°C), East Midlands (17.8°C), West Midlands (17.7°C), East England (18.5°C), London (19.6°C), South East (18.3°C), South West (17.6°C), Wales (17.2°C).
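To put the regional thresholds quoted above side by side, here is a minimal sketch that ranks them and measures each region's shortfall against the warmest. The values are copied from the caption; the dictionary name and layout are my own, and the 93rd centile of daily mean temperature is only a proxy for how warm a region is.

```python
# 93rd centile of daily mean temperature (°C) by region, as quoted from the
# caption to Figure 1(A) of Hajat et al 2014.
CENTILE_93 = {
    "North East": 16.6,
    "North West": 17.3,
    "Yorks & Hum": 17.5,
    "East Midlands": 17.8,
    "West Midlands": 17.7,
    "East England": 18.5,
    "London": 19.6,
    "South East": 18.3,
    "South West": 17.6,
    "Wales": 17.2,
}

warmest = max(CENTILE_93, key=CENTILE_93.get)
coldest = min(CENTILE_93, key=CENTILE_93.get)
gap = CENTILE_93[warmest] - CENTILE_93[coldest]

# List regions from coldest to warmest with their shortfall against the warmest.
for region, temp in sorted(CENTILE_93.items(), key=lambda item: item[1]):
    shortfall = CENTILE_93[warmest] - temp
    print(f"{region:<14} {temp:4.1f}°C  ({shortfall:.1f}°C below {warmest})")

print(f"Gap between {warmest} and {coldest}: {gap:.1f}°C")
```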

On this measure the coldest region, the North East, is a full 3°C cooler than London, the warmest region. Even with high climate sensitivities, the North East is unlikely to see temperature rises of 3°C in 50 years, which is what it would take to make its mean temperatures as high as London's today. Similarly, London will not be as hot as Milan. There would be an outcry if London had more than three times the heat deaths of Newcastle, or if Milan had more than three times the heat deaths of London. So how does Hajat et al 2014 reach these extreme conclusions?

There are a number of assumptions that are made, both explicit and implicit.

Assumption 1 : Population Increase

(T)otal UK population is projected to increase from 60 million in mid-2000s to 89 million by mid-2080s

By the 2050s there is roughly a 30% increase in population. Heat death rates per capita only show a 150% increase in five decades.

Assumption 2 : Lack of improvement in elderly vulnerability

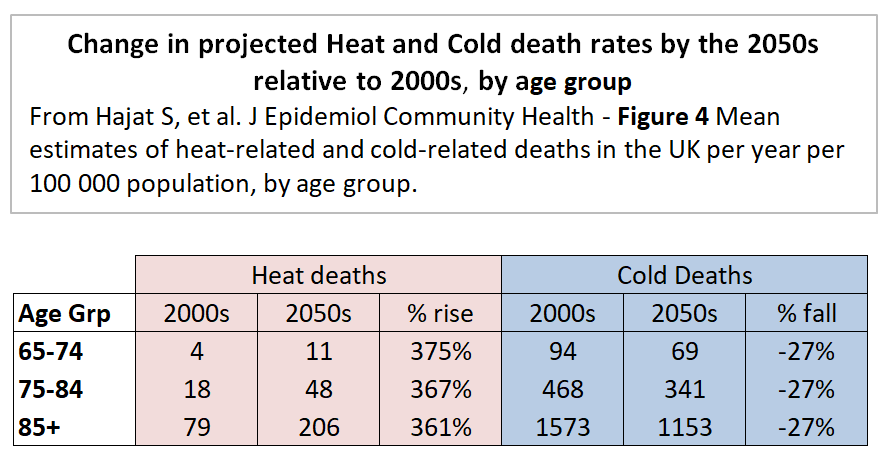

Taking the Hajat et al figure 4, the relative proportions of hot and cold deaths between age bands are not assumed to change, as my little table below shows.

I find the same percentage changes for all three age bands surprising. As the population ages, I would expect the 65-74 and 75-84 age bands to become relatively healthier, continuing the trends of the last few decades. That will make them less vulnerable to temperature extremes.

Assumption 3 : Climate Sensitivities

A subset of nine regional climate model variants corresponding to climate sensitivity in the range of 2.6–4.9°C was used.

This compares to the IPCC AR5 WG1 SPM, page 16:

Equilibrium climate sensitivity is likely in the range 1.5°C to 4.5°C (high confidence)

A mid-point of 3.75°C, compared to the IPCC’s 3°C, does not make much difference over 50 years. The IPCC’s RCP8.5 unmitigated emissions growth scenario has 3.7°C (4.5-0.8) of warming from 2010 to 2100. Pro-rata, the higher sensitivities give about 2.5°C of warming by the 2050s, still leaving mean temperatures in the North East just below those of London today.
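The pro-rata estimate can be sketched as follows. This is one plausible reading of the arithmetic rather than a definitive method: it assumes warming scales linearly with climate sensitivity and accrues evenly over time, and it takes "the 2050s" to mean 50 years on from 2010. The regional temperatures are the 93rd-centile values quoted from Figure 1(A), used here as a stand-in for regional mean temperatures.

```python
# Quoted inputs.
RCP85_WARMING_2010_2100 = 4.5 - 0.8   # °C of warming under RCP8.5, 2010 to 2100
IPCC_ECS_MID = (1.5 + 4.5) / 2        # mid-point of the IPCC AR5 likely range
HAJAT_ECS_MID = (2.6 + 4.9) / 2       # mid-point of the range used in the paper
NORTH_EAST_TODAY = 16.6               # °C, from Figure 1(A)
LONDON_TODAY = 19.6                   # °C, from Figure 1(A)

# Assumptions (mine): linear scaling with sensitivity, even warming over time.
YEARS_TOTAL = 2100 - 2010
YEARS_TO_2050S = 50

# Scale the RCP8.5 warming up to the paper's higher central sensitivity.
scaled_warming_2100 = RCP85_WARMING_2010_2100 * HAJAT_ECS_MID / IPCC_ECS_MID

# Take the share of that warming accrued by the 2050s.
warming_2050s = scaled_warming_2100 * YEARS_TO_2050S / YEARS_TOTAL
north_east_2050s = NORTH_EAST_TODAY + warming_2050s

print(f"Warming by the 2050s: {warming_2050s:.2f}°C")
print(f"North East in the 2050s: {north_east_2050s:.1f}°C "
      f"(London today: {LONDON_TODAY}°C)")
```

On these assumptions the warming comes out at a little over 2.5°C, which leaves the North East short of London's present figure.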

The IPCC WG1 report was published a few months after the Hajat paper was accepted for publication. However, the ECS range of 1.5−4.5 was unchanged from the 1979 Charney report, so there should be at least a footnote justifying the higher sensitivity. An alternative to these vague estimates derived from climate models is estimates derived from changes over the historical instrumental data record using energy budget models. The latest, Lewis and Curry 2018, gives an estimate of 1.5°C. This finding from the latest research would more than halve any warming to the 2050s predicted from the Hajat paper’s central ECS estimate.
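The "more than halve" claim can be checked with a short sketch. It assumes, crudely, that projected warming is proportional to equilibrium climate sensitivity, which is my simplification and not something either paper states; the constant names are illustrative.

```python
HAJAT_CENTRAL_ECS = (2.6 + 4.9) / 2   # °C, mid-point of the paper's range
LEWIS_CURRY_ECS = 1.5                 # °C, estimate quoted above

# Assumption: projected warming scales in proportion to ECS.
scale = LEWIS_CURRY_ECS / HAJAT_CENTRAL_ECS
reduction_pct = (1 - scale) * 100

print(f"Warming relative to the paper's central case: {scale:.0%}")
print(f"Reduction in projected warming: {reduction_pct:.0f}%")
```

Under that proportionality assumption the lower estimate gives 40% of the central-case warming, a reduction of 60%.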

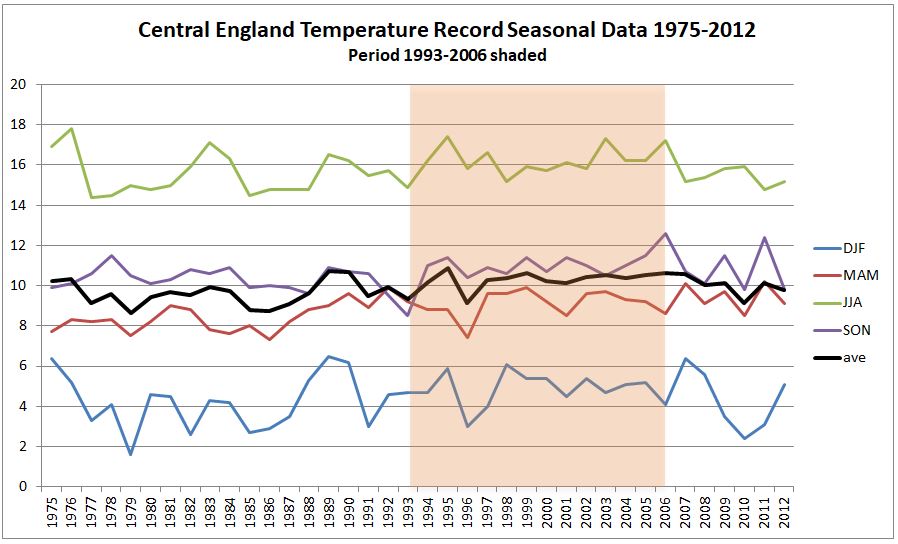

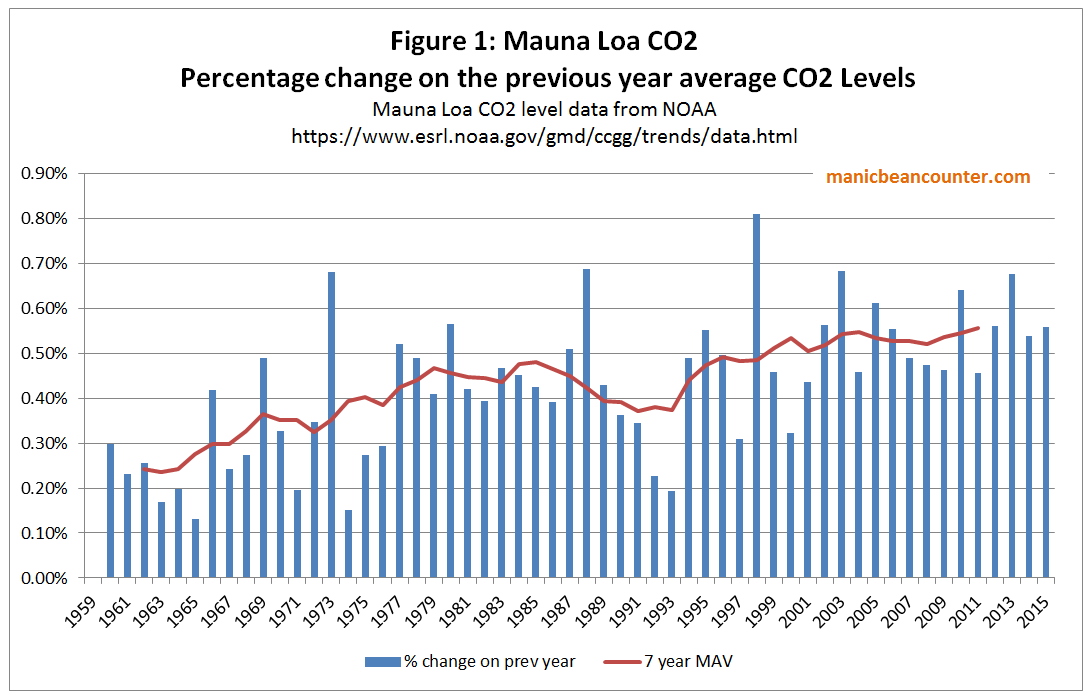

Assumption 4 : Short period of temperature data

The paper examined both regional temperature data and deaths for the period 1993–2006. This 14-year period had significant heatwaves in 1995, 2003 and 2006. Climatically this is a very short period, ending a full six years before the paper was submitted.

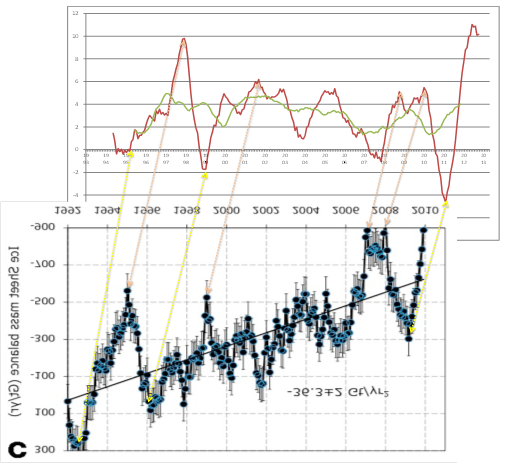

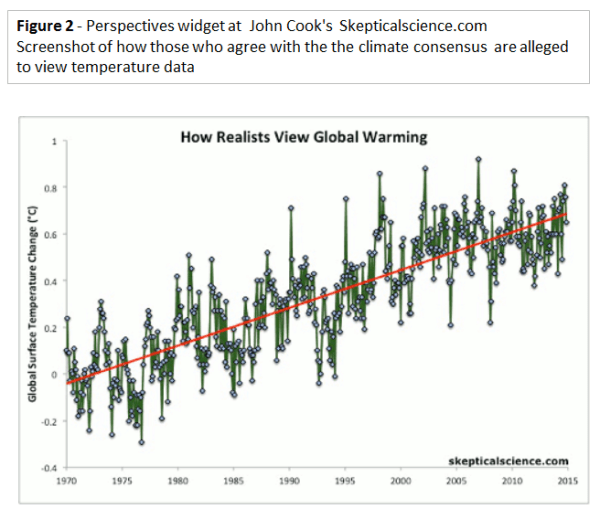

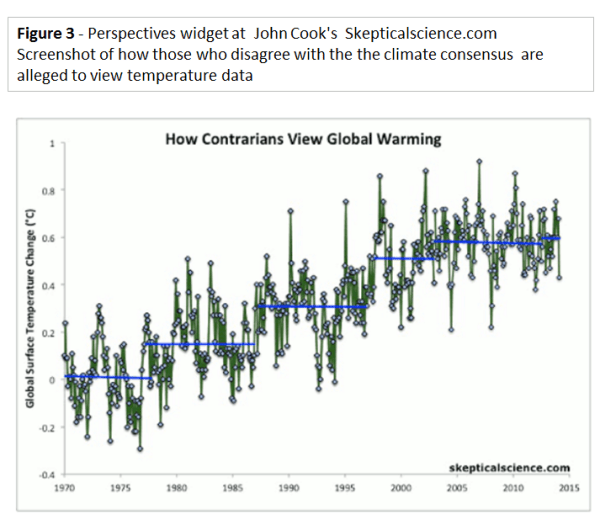

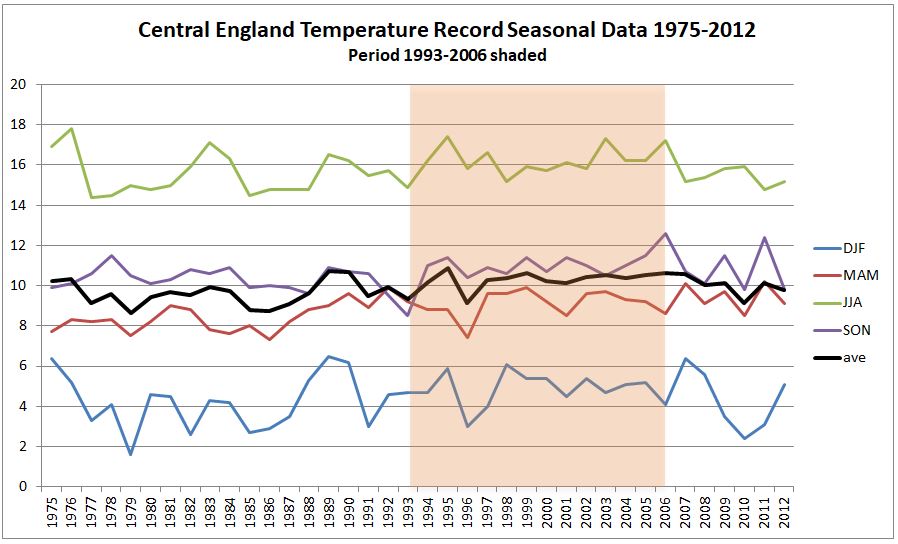

From the Met Office Hadley Centre Central England Temperature Data I have produced the following graphic of seasonal data for 1975-2012, with 1993-2006 shaded.

In the shaded period, typical mean summer temperatures (JJA) were generally warmer than in both the period before and the six years after. Winter (DJF) average temperatures for 2009 to 2011 were the coldest run of three winters in the whole period. Is this significant?

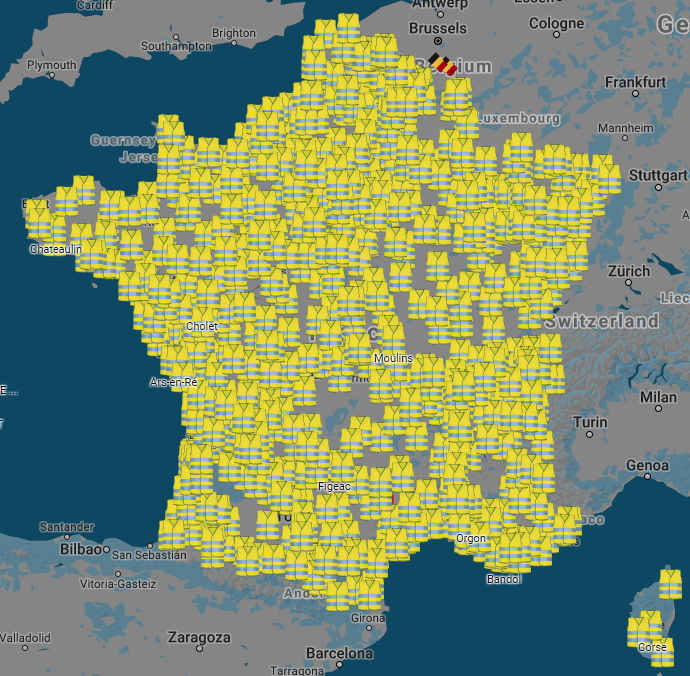

A couple of weeks ago the GWPF drew attention to a 2012 Guardian article, “The shape of British summers to come?”

It’s been a dull, damp few months and some scientists think we need to get used to it. Melting ice in Greenland could be bringing permanent changes to our climate

The news could be disconcerting for fans of the British summer. Because when it comes to global warming, we can forget the jolly predictions of Jeremy Clarkson and his ilk of a Mediterranean climate in which we lounge among the olive groves of Yorkshire sipping a fine Scottish champagne. The truth is likely to be much duller, and much nastier – and we have already had a taste of it. “We will see lots more floods, droughts, such as we’ve had this year in the UK,” says Peter Stott, leader of the climate change monitoring and attribution team at the Met Office. “Climate change is not a nice slow progression where the global climate warms by a few degrees. It means a much greater variability, far more extremes of weather.”

So, with six years of data available after the end of the data period, and five months before the paper was submitted on 31/01/2013 (nine months before the revised draft was submitted), there was a completely new projection saying the opposite of more extreme heatwaves.

The inclusion of the more recent available temperature data would likely have materially affected the modelled projections of extreme hot and cold deaths for many decades into the future.

Assumption 5 : Lack of Adaptation

The heat and cold death projections are “without adaptation”. This assumption means that over the decades people do not learn from experience, buy air conditioners, drink water or look out for the increasingly vulnerable. People basically ignore the rise in temperatures, so by the 2050s they treat a heatwave of 35°C exactly the same as one of 30°C today. To put this into context, it is worth looking at another paper used in the EAC Report.

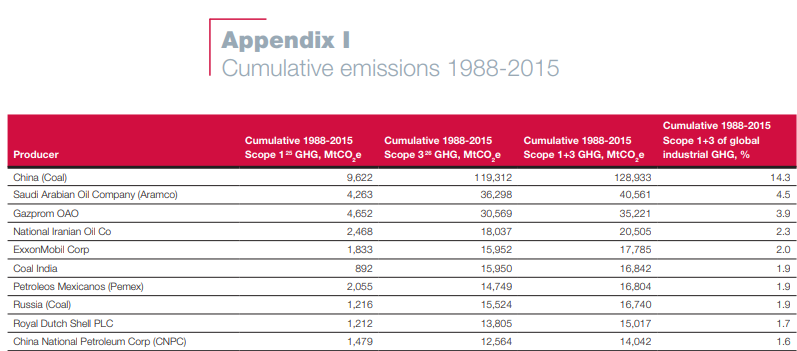

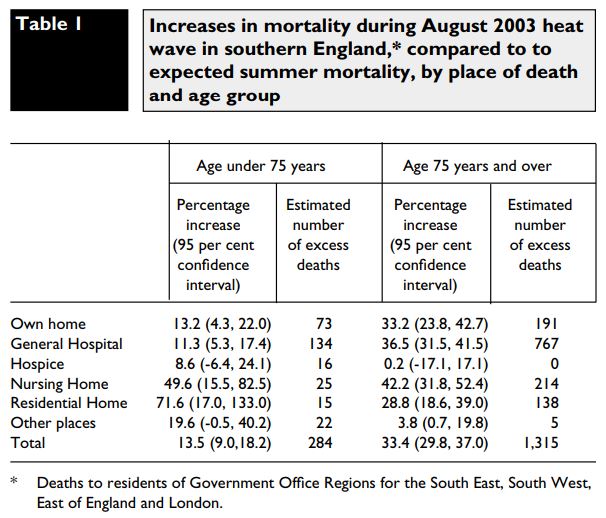

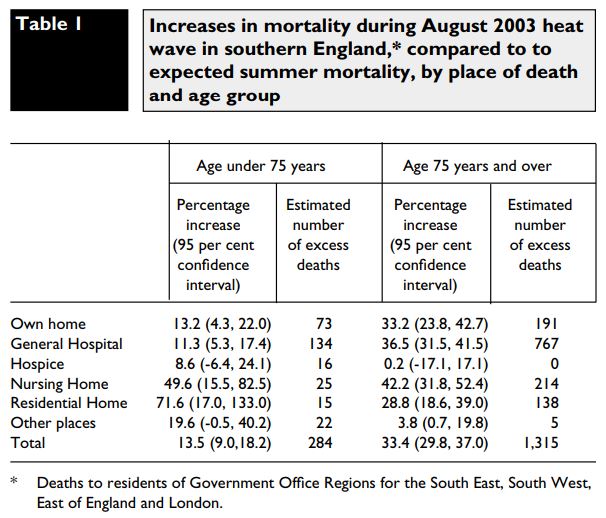

Mortality in southern England during the 2003 heat wave by place of death – Kovats et al – Health Statistics Quarterly Spring 2006

The only table is reproduced below.

Over half the total deaths were in General Hospitals. What does this “lack of adaptation” assumption imply about the care given by health professionals to vulnerable people in their care? Surely, seeing rising death tolls, they would be taking action? Or do they need a political committee in Westminster looking at data well after the event to point out what is happening under their very noses? Even when the data has been collated and analysed in such publications as the Government-run Health Statistics Quarterly? The assumption of no adaptation should have been set alongside an assumption of “adaptation after the event and full report”, with new extremes of temperature coming as a complete surprise. However, that might still be unrealistic considering “cold deaths” are a current problem.

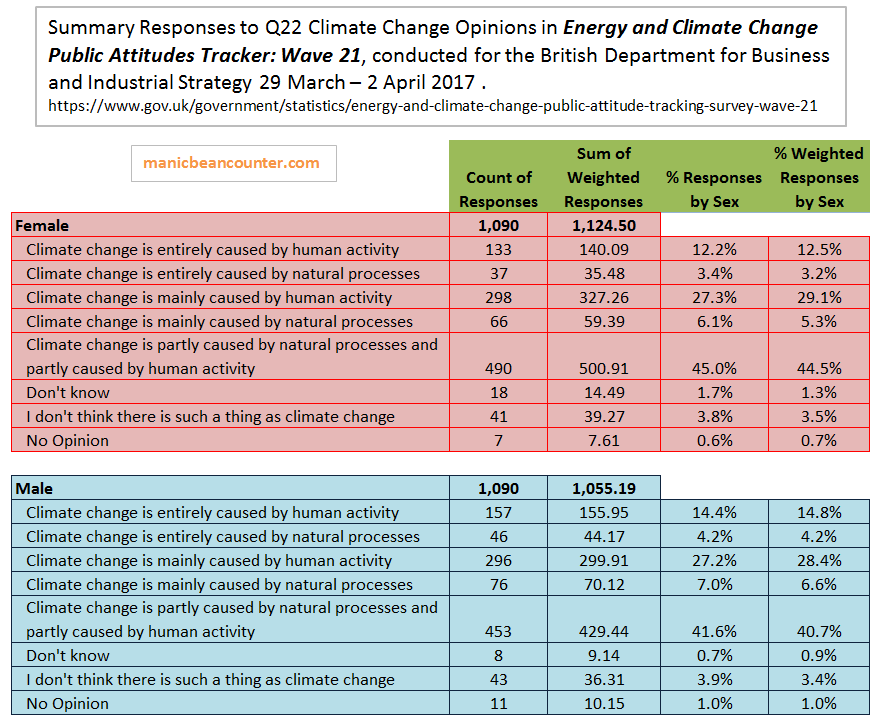

Assumption 6 : Complete failure of Policy

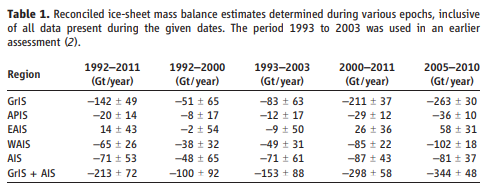

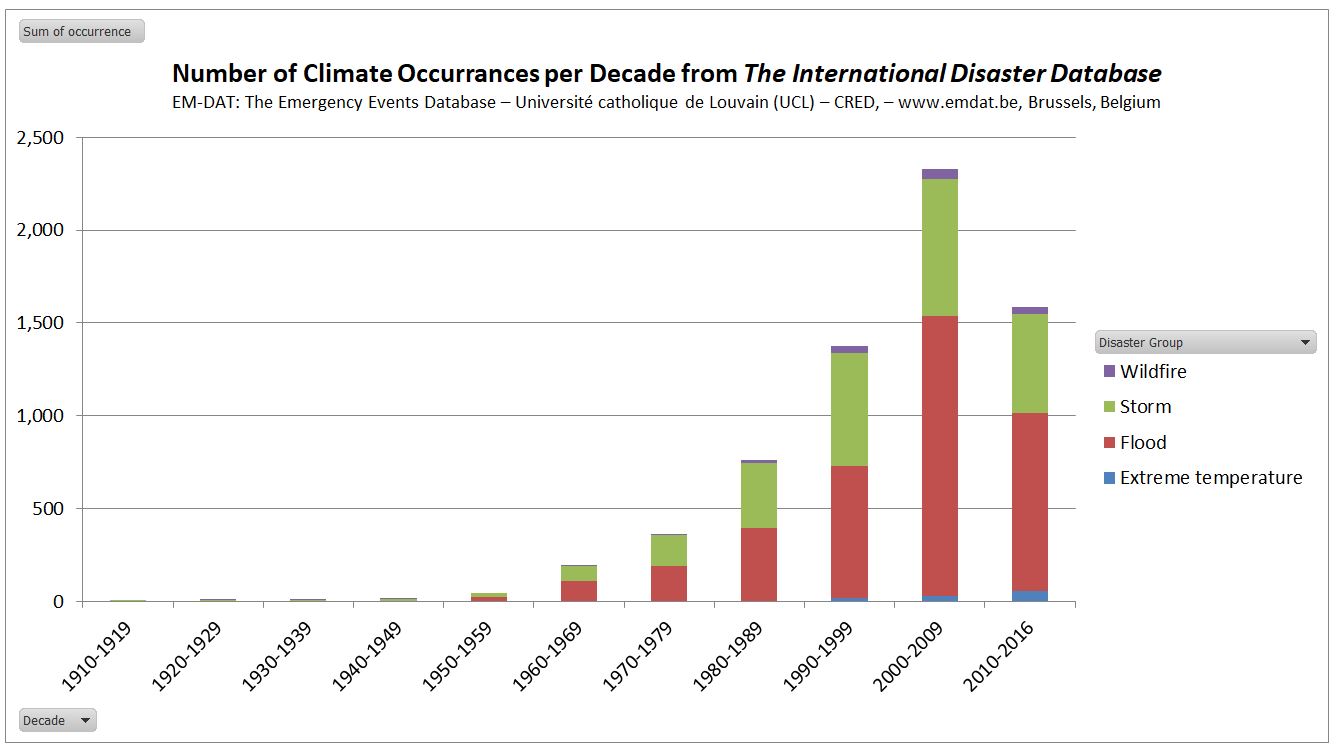

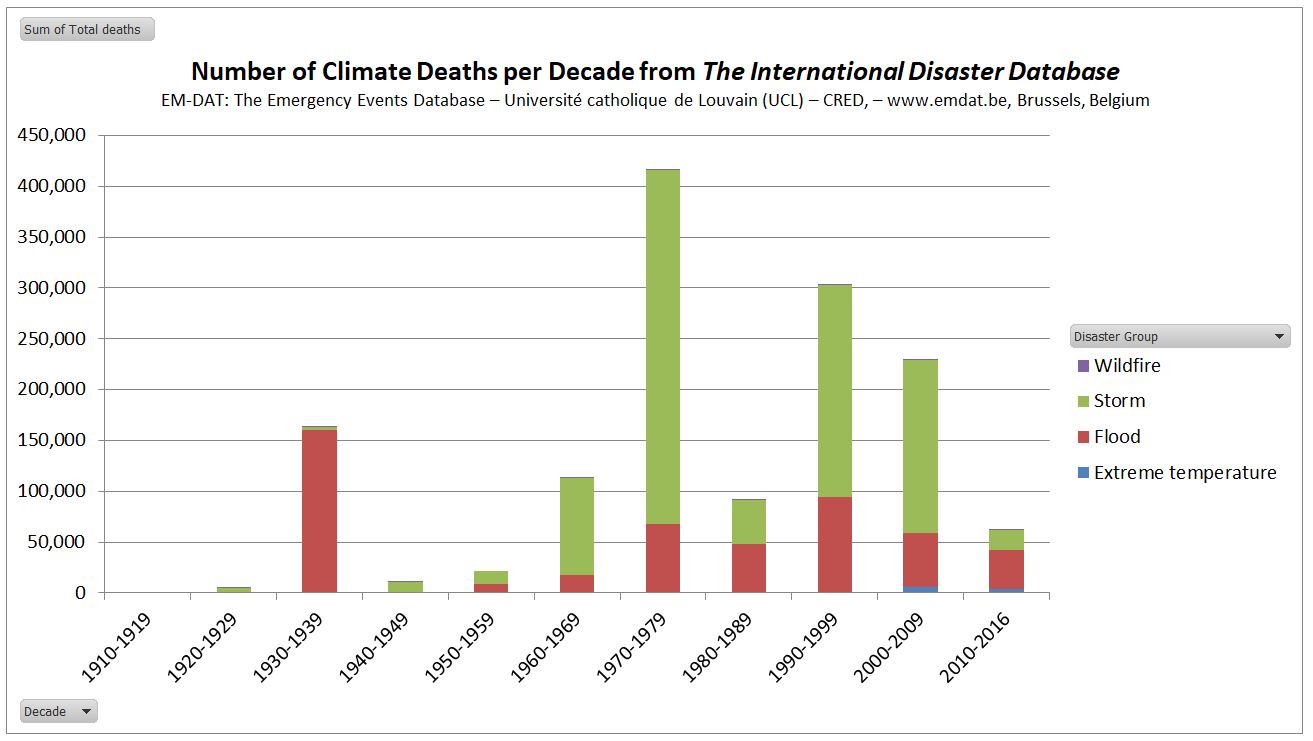

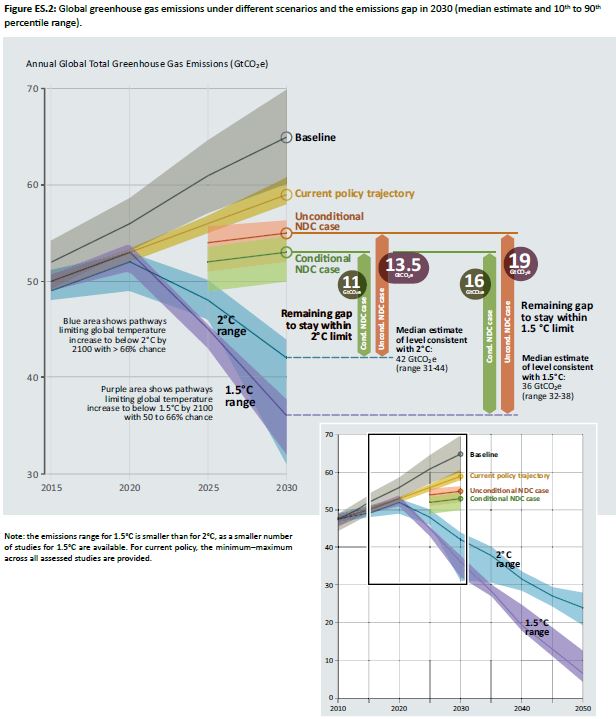

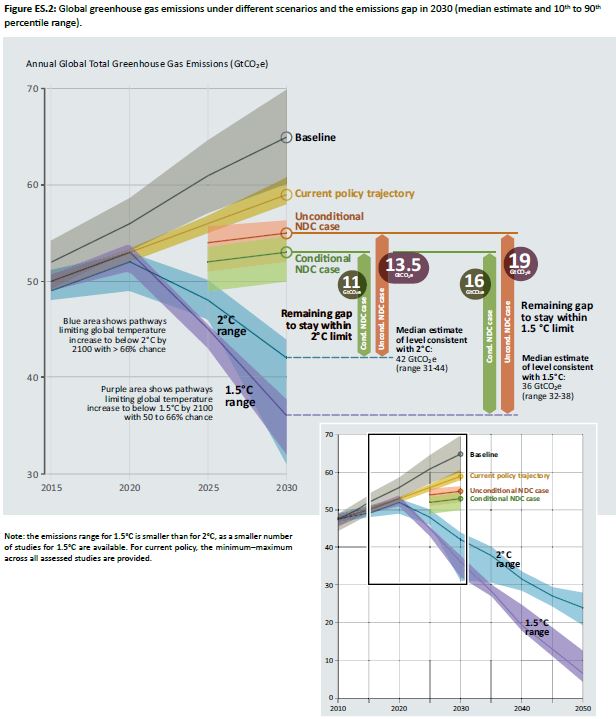

The assumption of high climate sensitivities resulting in large actual rises in global average temperatures in the 2050s and 2080s implies another assumption with political implications. The projection of 7,000 heat-related deaths assumes the complete failure of the Paris Agreement to control greenhouse emissions, let alone keep warming to within any arbitrary 1.5°C or 2°C. The Hajat paper may not state this assumption, but by assuming increasing temperatures from rising greenhouse gas levels, it implies that no effective global climate mitigation policies have been implemented. This is a fair assumption. The UNEP Emissions Gap Report 2017 (pdf), published in October last year, is the latest attempt to estimate the scale of the policy issue. The key is the diagram reproduced below.

The aggregate impact of climate mitigation policy proposals (as interpreted by the promoters of such policies) is much closer to the non-policy baseline than to the 1.5°C or 2°C emissions pathways. That means other countries have failed to follow Britain’s lead in reducing their emissions by 80% by 2050. In its headline “Heat-related deaths set to treble by 2050 unless Govt acts” the Environmental Audit Committee is implicitly accepting that the Paris Agreement will be a complete flop, and that the considerable costs and hardships imposed on the British people by the Climate Change Act 2008 will have been for nothing.

Concluding comments

Projections about the consequences of rising temperatures require making restrictive assumptions to achieve a result. In academic papers, some of these assumptions are explicitly-stated, others not. The assumptions are required to limit the “what-if” scenarios that are played out. The expected utility of modeled projections is related to whether the restrictive assumptions bear relation to actual reality and empirically-verified theory. The projection of over 7,000 heat deaths in the 2050s is based upon

(1) Population growth of 30% by the 2050s

(2) An aging population not getting healthier at any particular age

(3) Climate sensitivities higher than the consensus, and much higher than the latest data-based research findings

(4) A short period of temperature data with trends not found in the next few years of available data

(5) Complete lack of adaptation over decades – an implied insult to health professionals and carers

(6) Failure of climate mitigation policies to control the growth in temperatures.

Assumptions (2) to (5) are unrealistic, and making any of them more realistic would significantly reduce the projected number of heat deaths in the 2050s. The assumption of lack of adaptation is an implied insult to the many health professionals who monitor and adapt to changing conditions. Assuming a lack of effective climate mitigation policies implies that the £319bn Britain is projected to spend on combating climate change between 2014 and 2030 is a waste of money. Based on available data, this assumption is realistic.

Kevin Marshall