Global warming alarmism first emerged in the late 1980s, three decades ago. Put very simply, the claim is that climate change, resulting from human-caused increases in trace gases, is a BIG potential problem. The BIG solution is to reduce global greenhouse gas emissions through co-ordinated global action. The actual evidence shows a curious symmetry. The proponents of alarmism have failed to show that rises in greenhouse gas levels are making a non-trivial difference on a global scale, and the aggregate impact of the policy proposals, even if fully implemented, will make only a trivial difference to global emissions pathways. The Adoption of the Paris Agreement communique paragraph 17 clearly states the failure. My previous post puts forward reasons why the impact of mitigation policies will remain trivial.

In terms of an emerging large problem, the easiest impact to visualize, and the most direct impact of rising average temperatures, is rising sea levels. Rising temperatures will lead to sea level rise principally through meltwater from the polar ice-caps and thermal expansion of the oceans. Given that sea levels have been rising since the last ice age, if a BIG climate problem is emerging then it should be detectable in accelerating sea level rise. If the alarmism is credible, then after 30 years of failure to implement the BIG solution, with unrelenting increases in global emissions and an accelerating rise in CO2 levels for decades, there should be a clear response in terms of acceleration in the rate of sea level rise.

There is a strong debate as to whether sea-level rise is accelerating or not. Dr. Roy Spencer at WUWT makes a case for there being mild acceleration since about 1950. Based on the graph below (from Church and White 2013) he concludes:

The bottom line is that, even if (1) we assume the Church & White tide gauge data are correct, and (2) 100% of the recent acceleration is due to humans, it leads to only 0.3 inches per decade that is our fault, a total of 2 inches since 1950.

As Judith Curry mentioned in her continuing series of posts on sea level rise, we should heed the words of the famous oceanographer, Carl Wunsch, who said,

“At best, the determination and attribution of global-mean sea-level change lies at the very edge of knowledge and technology. Both systematic and random errors are of concern, the former particularly, because of the changes in technology and sampling methods over the many decades, the latter from the very great spatial and temporal variability. It remains possible that the database is insufficient to compute mean sea-level trends with the accuracy necessary to discuss the impact of global warming, as disappointing as this conclusion may be.”

In metric, the so-called human element of 2 inches since 1950 is 5 centimetres. The total in over 60 years is less than 15 centimetres. The time period for improving sea defences to cope with this is way beyond normal human planning horizons. Go to any coastal strip with sea defences, such as the dykes protecting much of the Netherlands, with a measure and imagine increasing those defences by 15 centimetres.

However, a far more thorough piece is from Dave Burton (of Sealevel.info) in three comments. Below is a repost of his comments.

Agreed. On Twitter, or when sloppy and in a hurry, I say “no acceleration.” That’s shorthand for, “There’s been no significant, sustained acceleration in the rate of sea-level rise, over the last nine or more decades, detectable in the measurement data from any of the longest, highest-quality, coastal sea-level records.” Which is right.

That is true at every site with a very long, high-quality measurement record. If you do a quadratic regression over the MSL data, depending on the exact date interval you analyze, you may find either a slight acceleration or deceleration, but unless you choose a starting date prior to the late 1920s, you’ll find no practically-significant difference from perfect linearity. In fact, for the great majority of cases, the acceleration or deceleration doesn’t even manage statistical significance.

What do I mean by “practically-significant,” you might wonder? I mean that, if the acceleration or deceleration continued for a century, it wouldn’t affect sea-level by more than a few inches. That means it’s likely dwarfed by common coastal processes like vertical land motion, sedimentation, and erosion, so it is of no practical significance.
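The quadratic regression described above can be sketched in a few lines. This is only an illustration, not the method used at Sealevel.info: the function name `fit_quadratic` is hypothetical, and the monthly record is synthetic (a 1.5 mm/year linear trend plus random noise), not real tide gauge data. The acceleration is twice the fitted quadratic coefficient.

```python
import numpy as np

def fit_quadratic(years, msl_mm):
    """Least-squares quadratic fit of mean sea level (mm) against time (years).

    Returns (trend, accel): the rate at the record midpoint in mm/yr
    and the acceleration in mm/yr^2.
    """
    years = np.asarray(years, dtype=float)
    msl_mm = np.asarray(msl_mm, dtype=float)
    # Centre time on the record midpoint, so the linear coefficient
    # is the rate of rise at the middle of the record.
    t = years - (years.min() + years.max()) / 2.0
    c2, c1, _c0 = np.polyfit(t, msl_mm, 2)  # highest power first
    # For y = c0 + c1*t + c2*t^2 the second derivative is 2*c2.
    return c1, 2.0 * c2

# Synthetic monthly record (an assumption for illustration only):
# a purely linear 1.5 mm/yr rise with 30 mm of random month-to-month noise.
rng = np.random.default_rng(0)
years = np.arange(1905, 2018.25, 1 / 12)
msl = 1.5 * (years - years[0]) + rng.normal(0.0, 30.0, years.size)

trend, accel = fit_quadratic(years, msl)
print(f"trend = {trend:.3f} mm/yr, acceleration = {accel:.5f} mm/yr^2")
```

Because the synthetic series has no built-in acceleration, any non-zero acceleration the fit reports comes from the noise alone, which is the kind of result that has to be ruled out before an acceleration in real data is called significant.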

For instance, here’s one of the very best Pacific tide gauges. It is at a nearly ideal location (mid-ocean, which minimizes ENSO effects), on a very tectonically stable island, with very little vertical land motion, and a very trustworthy, 100% continuous, >113-year measurement record (1905/1 through 2018/3):

As you can see, there have been many five-year to ten-year “sloshes-up” and “sloshes-down,” but there’s been no sustained acceleration, and no apparent effect from rising CO2 levels.

The linear trend is +1.482 ±0.212 mm/year (which is perfectly typical).

Quadratic regression calculates an acceleration of -0.00539 ±0.01450 mm/yr².

The minus sign means deceleration, but it is nowhere near statistically significant.

To calculate the effect of a century of sustained acceleration on sea-level, you divide the acceleration by two, and multiply it by the number of years squared, 100² = 10,000. In this case, -0.00539/2 × 10,000 = -27 mm (about one inch).
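The arithmetic above is the standard constant-acceleration formula, half the acceleration times the elapsed time squared. A minimal sketch, with the hypothetical helper name `century_effect_mm` and the Honolulu acceleration figure taken from the comment:

```python
MM_PER_INCH = 25.4

def century_effect_mm(accel_mm_per_yr2, years=100.0):
    """Sea-level change (mm) from a constant acceleration sustained for `years`."""
    return 0.5 * accel_mm_per_yr2 * years ** 2

# Acceleration quoted in the comment for the Honolulu gauge.
effect = century_effect_mm(-0.00539)
print(f"{effect:.0f} mm, or {effect / MM_PER_INCH:.1f} inches")
```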

That illustrates a rule-of-thumb that’s worth memorizing: if you see claimed sea-level acceleration or deceleration numbers on the order of 0.01 mm/yr² or less, you can stop calculating and immediately pronounce it practically insignificant, regardless of whether it is statistically significant.

However, the calculation above actually understates the effect of projecting the quadratic curve out another 100 years, compared to a linear projection, because the starting rate of SLR is wrong. On the quadratic curve, the point of “average” (linear) trend is the midpoint, not the endpoint. So to see the difference at 100 years out, between the linear and quadratic projections, we should calculate from that mid-date, rather than the current date. In this case, that adds 56.6 years, so we should multiply half the acceleration by 156.6² = 24,524.

-0.00539/2 × 24,524 = -66 mm = -2.6 inches (still of no practical significance).
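The midpoint adjustment can be sketched the same way. The function name `gap_vs_linear_mm` is hypothetical; the 56.6 years is the figure given in the comment for the time from the record midpoint to the present. This reproduces the comment's arithmetic only, and is not an exact comparison of two fitted curves.

```python
MM_PER_INCH = 25.4

def gap_vs_linear_mm(accel_mm_per_yr2, horizon_years, years_since_midpoint):
    """Approximate gap (mm) between quadratic and linear projections.

    Time is counted from the record midpoint, where the quadratic curve's
    rate is taken to match the linear trend.
    """
    elapsed = horizon_years + years_since_midpoint
    return 0.5 * accel_mm_per_yr2 * elapsed ** 2

# Honolulu figures from the comment: 100 years ahead, midpoint 56.6 years back.
gap = gap_vs_linear_mm(-0.00539, 100.0, 56.6)
print(f"{gap:.0f} mm, or {gap / MM_PER_INCH:.1f} inches")
```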

Church & White have been down this “acceleration” road before. Twelve years ago they published the most famous sea-level paper of all, A 20th Century Acceleration in Global Sea-Level Rise, known everywhere as “Church & White (2006).”

It was the first study anywhere which claimed to have detected an acceleration in sea-level rise over the 20th century. Midway through the paper they finally tell us what that 20th century acceleration was:

“For the 20th century alone, the acceleration is smaller at 0.008 ± 0.008 mm/yr² (95%).”

(The paper failed to mention that all of the “20th century acceleration” which their quadratic regression detected had actually occurred prior to the 1930s, but never mind that.)

So, applying the rule-of-thumb above, the first thing you should notice is that 0.008 mm/yr² of acceleration, even if correct, is practically insignificant. It is so tiny that it just plain doesn’t matter.

In 2009 they posted on their web site a new set of averaged sea-level data, from a different set of tide gauges. But they published no paper about it, and I wondered why not. So I duplicated their 2006 paper’s analysis, using their new data, and not only did it, too, show slight deceleration after 1925, all the 20th century acceleration had gone away, too. Even for the full 20th century their data showed a slight (statistically insignificant) deceleration.

My guess is that the reason they wrote no paper about it was that the title would have had to have been something like this:

Church and White (2009), Never mind: no 20th century acceleration in global sea-level rise, after all.

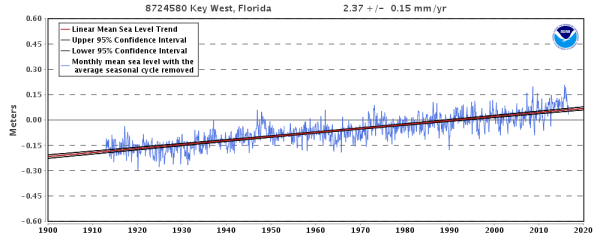

There is no real disagreement between the two accounts. Roy Spencer is saying that if the Church and White paper is correct there is trivial acceleration, while Dave Burton is making a more general point about there being no statistically significant acceleration or deceleration in any data set. At Key West in low-lying Florida, the pattern of near-constant sea level rise over the past century is similar to Honolulu. The rate of rise is about 50% more, at 9 inches per century, but is more in line with the long-term global average from tide gauges. Given that Hawaii is a growing volcanic island, this should not come as a surprise.

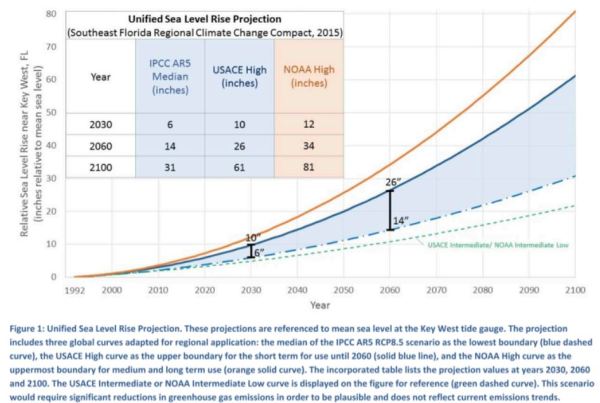

I chose Key West in Florida because, supposedly by projecting from this real data and from climate models, the Miami-Dade Sea Level Rise Task Force produced the following Unified Sea Level Rise Projection.

The projections of significant acceleration in the rate of sea level rise are at odds with the historical data. Any such acceleration should already be discernible, as the projection period includes over two decades of actual data. Further, as the IPCC AR5 RCP8.5 scenario is the projection without climate mitigation policy, the implied assumption of this report on adapting to a type of climate change is that climate mitigation policies will be completely useless. As this graphic is central to the report, it would appear that the most biased projections are the ones influencing public policy. Basic validation of theory against modelled trends in the peer-reviewed literature (Dr Roy Spencer) or against actual measured data (Dave Burton) appears to be rejected in favour of beliefs in the mainstream climate consensus.

The curious symmetry of climate alarmism, between the lack of evidence for a BIG potential climate problem and the lack of an agreed BIG mitigation policy solution, is evident in sea level rise projections. Unfortunately, given that policy is based on the ridiculous projections, it is people outside of the consensus that will suffer. Expensive and unnecessary flood defences will be built and low-lying areas will be blighted by alarmist reports.

Kevin Marshall