Earlier this week Nature Plants published a new paper, “Decreases in global beer supply due to extreme drought and heat”.

The Scientific American has an article “Trouble Brewing? Climate Change Closes In on Beer Drinkers” with the sub-title “Increasing droughts and heat waves could have a devastating effect on barley stocks—and beer prices”. The Daily Mail headlines with “Worst news ever! Australian beer prices are set to DOUBLE because of global warming”. All those climate deniers in Australia have denied future generations the ability to down a few cold beers with their barbecued ~~steaks~~ tofu salads.

This research should be taken seriously, as it is by a crack team of experts across a number of disciplines and universities. Said Steven J Davis of the University of California at Irvine:

The world is facing many life-threatening impacts of climate change, so people having to spend a bit more to drink beer may seem trivial by comparison. But … not having a cool pint at the end of an increasingly common hot day just adds insult to injury.

Liking the odd beer or three, I am really concerned about this prospect, so I rented the paper for 48 hours to check it out. What a sensation it is. Here are a few impressions.

Layers of Models

According to the Introduction, a series of models was used. The authors:

- Created an extreme events severity index for barley based on extremes in historical data for 1981-2010.

- Plugged this into five different Earth Systems models for the period 2010-2099 and ran it against different RCP scenarios, the most extreme of which shows over 5 times the warming of the 1981-2010 period. What is more, severe climate events are a non-linear function of temperature rise.

- Then modelled the impact of these severe weather events on crop yields in 34 World Regions using a “process-based crop model”.

- “(W)e examine the effects of the resulting barley supply shocks on the supply and price of beer in each region using a global general equilibrium model (Global Trade Analysis Project model, GTAP).”

- “Finally, we compare the impacts of extreme events with the impact of changes in mean climate and test the sensitivity of our results to key sources of uncertainty, including extreme events of different severities, technology and parameter settings in the economic model.”

What I found odd was that they made no allowance for increasing demand for beer over a 90-year period, despite mentioning in the second sentence that

(G)lobal demand for resource-intensive animal products (meat and dairy) processed foods and alcoholic beverages will continue to grow with rising incomes.

Extreme events – severity and frequency

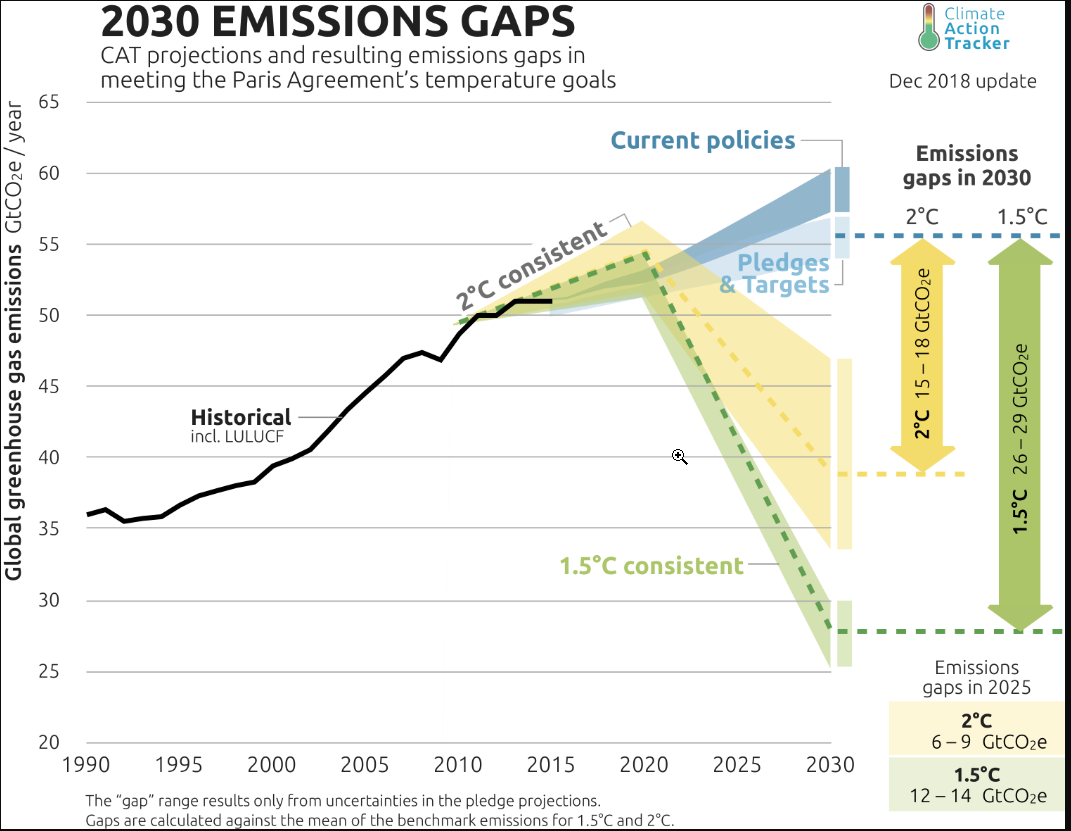

As stated in the second point above, the paper uses different RCP scenarios. These featured prominently in the IPCC AR5 of 2013 and 2014. They go from RCP2.6, which is the most aggressive mitigation scenario, through to RCP8.5, the non-policy scenario, which projected around 4.5C of warming from 1850-1870 through to 2100, or about 3.8C of warming from 2010 to 2090.

Figure 1 has two charts. The left-hand chart shows that extreme events will increase in intensity with temperature. RCP2.6 will do very little, but RCP8.5 would result, by the end of the century, in events 6 times as intense as today. The problem is that for up to 1.5C of warming there appears to be no noticeable change whatsoever. That is about the same amount of warming as the world has experienced from 1850 to 2010 per HADCRUT4. Beyond that, things take off. How the models empirically project well beyond known experience for a completely different scenario defeats me. It could be largely based on their modelling assumptions, which are in turn strongly tainted by their beliefs in CAGW. There is no reality check that their models are not falling apart, or reliant on arbitrary non-linear parameters.

The right-hand chart shows that extreme events are projected to increase in frequency as well. Under RCP 2.6 there is a ~4% chance of an extreme event, rising to ~31% under RCP 8.5. Again, there is an issue of projecting well beyond any known range.
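To put the two frequencies in perspective, here is a minimal sketch of what they would imply over a decade. It assumes the ~4% and ~31% figures are annual probabilities and that years are independent of each other; both are simplifying assumptions for illustration, and the function names are hypothetical.

```python
def expected_extreme_years(annual_prob, years=10):
    """Expected number of extreme years in a period (assumes a fixed annual probability)."""
    return annual_prob * years


def prob_at_least_one(annual_prob, years=10):
    """Chance of at least one extreme year in a period (assumes independent years)."""
    return 1 - (1 - annual_prob) ** years


# Approximate frequencies read from the right-hand chart of Figure 1
for label, p in (("RCP 2.6", 0.04), ("RCP 8.5", 0.31)):
    print(
        f"{label}: {expected_extreme_years(p):.1f} extreme years per decade, "
        f"{prob_at_least_one(p):.0%} chance of at least one"
    )
```

On these assumptions the jump is from an extreme year being an occasional event to being expected about three years in every ten.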

Fig 2 average barley yield shocks during extreme events

The paper assumes that the current geographical distribution and area of barley cultivation is maintained. They have modelled a gridded average yield change in 2099, relative to 1981-2010, at 0.5° x 0.5° resolution to create four colorful world maps, one for each of the four RCP emissions scenarios. At the equator, each grid cell is about 56 x 56 km for an area of 3100 km², or 1200 square miles. Of course, nearer the poles the area diminishes significantly. This is quite a fine level of detail for projections based on 30 years of data to radically different circumstances 90 years in the future. Map a) shows the results for RCP 8.5. On average yields are projected to be 17% down. As Paul Homewood showed in a post on the 17th, this projected yield fall should be put in the context of a doubling of yields per hectare since the 1960s.
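The grid-cell arithmetic can be checked with a short sketch. It treats the Earth as a sphere with roughly 111.32 km per degree of arc, which is an approximation, and the function name is hypothetical rather than anything from the paper.

```python
import math

# Approximate length of one degree of arc on a spherical Earth (assumption)
KM_PER_DEGREE = 111.32


def grid_cell_km(lat_deg, cell_deg=0.5):
    """Return (east-west width, north-south height, area) of a grid cell centred at lat_deg."""
    height = KM_PER_DEGREE * cell_deg
    # East-west width shrinks with the cosine of latitude
    width = height * math.cos(math.radians(lat_deg))
    return width, height, width * height


for lat in (0, 30, 45, 60):
    width, height, area = grid_cell_km(lat)
    print(f"lat {lat:2d}: {width:5.1f} km x {height:4.1f} km = {area:6.0f} km^2")
```

At 60 degrees of latitude the east-west width, and so the area, is half that at the equator.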

This increase in productivity has often been solely ascribed to the improvements in seed varieties (see Norman Borlaug), mechanization and use of fertilizers. These have undoubtedly had a large part to play in this productivity improvement. But also important is that agriculture has become more intensive. Forty years ago it was clear that there was a distinction between the intensive farming of Western Europe and the extensive farming of the North American prairies and the Russian steppes. It was not due to better soils or climate in Western Europe. This difference can be staggering. In the Soviet Union about 30% of agricultural output came from around 1% of the available land. These were the plots where workers on the state and collective farms could produce their own food and sell the surplus in the local markets.

Looking at chart a in Figure 2, there are wide variations about this average global decrease of 17%.

In North America, Montana and North Dakota have areas where barley shocks during extreme years will lead to mean yield changes over 90% higher than normal, and the surrounding areas have yields >50% higher than normal. But go less than 1000 km North into Canada to the Calgary/Saskatoon area and there are small decreases in yields.

In Eastern Bolivia – the part due North of Paraguay – there is the biggest patch of > 50% reductions in the world. Yet 500-1000 km away there is a North-South strip (probably just 56km wide) with less than a 5% change.

There is a similar picture in Russia. On the Kazakhstani border, there are areas of > 50% increases, but in a thinly populated band further North and West, running from around Kirov to Southern Finland, there are massive decreases in yields.

Why, over the course of decades, those with increasing yields would not increase output, and those with decreasing yields would not switch to something else, defeats me. After all, if overall yields are decreasing due to frequent extreme weather events, the farmers with falling yields would be losing money, and those farmers who do well when overall yields are down would be making extraordinary profits.

A Weird Economic Assumption

Building up to looking at costs, there is a strange assumption.

(A)nalysing the relative changes in shares of barley use, we find that in most case barley-to-beer shares shrink more than barley-to-livestock shares, showing that food commodities (in this case, animals fed on barley) will be prioritized over luxuries such as beer during extreme events years.

My knowledge of farming and beer is limited, but I believe that cattle can be fed on other things than barley. For instance grass, silage, and sugar beet. Yet, beers require precise quantities of barley and hops of certain grades.

Further, cattle feed is a large part of the cost of a kilo of beef or a litre of milk. But it takes around 250-400g of malted barley to produce a litre of beer. The current wholesale price of malted barley is about £215 a tonne, which works out at 5.4 to 8.6p per litre of beer. About the cheapest 4% alcohol lager I can find in a local supermarket is £3.29 for 10 x 250ml bottles, or £1.32 a litre. Taking off 20% VAT and excise duty leaves about 30p a litre for raw materials, manufacturing costs, packaging, manufacturer’s margin, transportation, supermarket’s overhead and supermarket’s margin. For comparison, four pints (2.273 litres) of fresh milk costs £1.09 in the same supermarket, working out at 48p a litre. This carries no excise duty or VAT. It might have greater costs due to refrigeration, but I would suggest it costs more to produce, and that feed is far more than 5p a litre.

I know that a reasonable 0.5 litre bottle of ale is £1.29 to £1.80 in the supermarkets I shop in, but it is the cheapest beer that will likely suffer the biggest percentage rise from an increase in raw material prices. Due to taxation and other costs, large changes in raw material prices will have very little impact on final retail costs. Even less so in pubs, where a British pint (568ml) varies from £4 to £7 a litre equivalent.
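The barley arithmetic above can be laid out as a short sketch. It uses only the figures quoted in the text; the pass-through rule (extra malt cost added to the shelf price penny for penny, with no markup and no extra VAT) is a simplifying assumption, and the function names are hypothetical.

```python
MALT_PRICE_GBP_PER_TONNE = 215.0          # wholesale malted barley, from the text
MALT_KG_PER_LITRE = (0.250, 0.400)        # 250-400g of malt per litre of beer
RETAIL_GBP_PER_LITRE = 3.29 / (10 * 0.250)  # 10 x 250ml bottles for £3.29


def malt_cost_pence_per_litre(kg_per_litre, price_per_tonne=MALT_PRICE_GBP_PER_TONNE):
    """Malt cost in pence for one litre of beer."""
    return kg_per_litre * price_per_tonne / 1000 * 100


def retail_rise_percent(kg_per_litre, malt_price_multiplier):
    """Percentage rise in shelf price if malt prices are multiplied.

    Assumption: the extra cost is passed on penny for penny.
    """
    extra_pence = malt_cost_pence_per_litre(kg_per_litre) * (malt_price_multiplier - 1)
    return extra_pence / (RETAIL_GBP_PER_LITRE * 100) * 100


for kg in MALT_KG_PER_LITRE:
    print(
        f"{kg * 1000:.0f}g malt: {malt_cost_pence_per_litre(kg):.1f}p a litre; "
        f"doubling the malt price adds {retail_rise_percent(kg, 2):.1f}% "
        f"to a £{RETAIL_GBP_PER_LITRE:.2f} litre"
    )
```

Even a doubling of the malt price, on this simple pass-through assumption, moves the cheapest supermarket lager by only a few percent.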

That is, the assumption is the opposite of what would happen in a free market. In the face of a shortage, farmers will substitute other forms of cattle feed for barley, whilst beer manufacturers will absorb the extra cost.

Disparity in Costs between Countries

The most bizarre claim in the article is contained in the central column of Figure 4, which looks at the projected increases in the cost of a 500 ml bottle of beer in US dollars. Chart h shows this for the most extreme RCP 8.5 model.

I was very surprised that a global general equilibrium model would come up with such huge disparities in costs after 90 years. After all, my understanding is that these models use utility-maximizing consumers, profit-maximizing producers, perfect information and instantaneous adjustment. Clearly there is something very wrong with this model. So I decided to compare where I live in the UK with neighbouring Ireland.

In the UK and Ireland there are similar high taxes on beer, with Ireland's being slightly higher. Both countries have lots of branches of the massive discount chain Aldi, which lists some products on its websites, aldi.co.uk and aldi.ie. In Ireland a 500 ml can of Saint Etienne Lager is €1.09, or €2.18 a litre, or £1.92 a litre. In the UK it is £2.59 for 4 x 440ml cans or £1.59 a litre. The lager is about 21% more in Ireland. But the tax difference should only be about 15% on a 5% beer (Saint Etienne is 4.8%). Aldi are not making bigger profits in Ireland; they may just have higher costs in Ireland, or lesser margins on other items. It is also comparing a single can against a multipack. So pro-rata the £1.80 ($2.35) bottle of beer in the UK would be about $2.70 in Ireland. Under the RCP 8.5 scenario, the models predict the bottle of beer to rise by $1.90 in the UK and $4.84 in Ireland. Strip out the excise duty and VAT and the price differential goes from zero to $2.20.

Now suppose you were a small beer manufacturer in England, Wales or Scotland. If beer was selling for $2.20 more in Ireland than in the UK, would you not want to stick 20,000 bottles in a container and ship it to Dublin?
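A back-of-envelope sketch of that container, using the $2.20 pre-tax differential and the 20,000 bottles from the text. The `shipping_usd` parameter is a hypothetical placeholder for freight and handling, not a quoted rate.

```python
def container_gain_usd(bottles, price_gap_usd, shipping_usd=0.0):
    """Gross gain from selling a container load at the higher price.

    shipping_usd is a hypothetical freight and handling cost for the whole load.
    """
    return bottles * price_gap_usd - shipping_usd


# Figures from the text: 20,000 bottles and a $2.20 pre-tax price differential
gain = container_gain_usd(bottles=20_000, price_gap_usd=2.20)
print(f"Gain before freight: ${gain:,.0f}")
```

That is about $44,000 per container before freight, which is the sort of margin that would not survive for long once brewers noticed it.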

If the researchers really understood the global brewing industry, they would realize that there are major brands sold across the world. Many are brewed in a number of countries to the same recipe. It is the barley that is shipped to the brewery, where equipment and techniques are identical with those in other parts of the world. The researchers seem to have failed to get away from their computer models to conduct field work in a few local bars.

What can be learnt from this?

When making projections well outside of any known range, the results must be sense-checked. Clearly, although the researchers have used an economic model, they have not understood the basics of economics. People are not dumb automatons waiting for some official to tell them to change their patterns of behavior in response to changing circumstances. They notice changes in the world around them and respond to them. A few seize the opportunities presented and can become quite wealthy as a result. Farmers have been astute enough to note mounting losses and change how and what they produce.

There is also competition between regions. For example, in the 1960s Brazil produced over half the world’s coffee. The major region for production in Brazil was centered around Londrina in the North-East of Parana state. Despite straddling the Tropic of Capricorn, every few years there would be a spring-time frost which would destroy most of the crop. By the 1990s most of the production had moved north to Minas Gerais, well out of the frost belt. The rich fertile soils around Londrina are now used for other crops, such as soya, cassava and mangoes. It was not out of human design that the movement occurred, but simply that the farmers in Minas Gerais could make bumper profits in the frost years.

The publication of this article shows a problem of peer review. Nature Plants is basically a biology journal. Reviewers are not likely to have specialist skills in climate models or economic theory, though those selected should have experience in agricultural models. If peer review is literally that, it will fail anyway in an inter-disciplinary subject, where the participants do not have a general grounding in all the disciplines. In this paper it is not just economics, but knowledge of product costing as well. It is academic superiors from the specialisms that are required for review, not inter-disciplinary peers.

Kevin Marshall