Last month marked the 30th anniversary of James Hansen’s Congressional testimony that kicked off the attempts to control greenhouse gas emissions. The testimony was clearly an attempt to influence public policy by linking human greenhouse gas emissions to dangerous global warming. Unlike previous attempts (such as by then Senator Al Gore), Hansen’s testimony was hugely successful. But do the scientific projections that underpinned the testimony hold up against the actual data? The key part of that testimony was a graph from Hansen et al 1988* Global climate changes as forecast by Goddard Institute for Space Studies three-dimensional model, reproduced below.

Figure 1: Hansen et al 1988 – Figure 3(a) in the Congressional Testimony

Note the language of the title of the paper. This is a forecast of global average temperatures contingent upon certain assumptions. The ambiguous part is the assumptions.

The assumptions of Hansen et al. 1988

From the paper.

4. RADIATIVE FORCING IN SCENARIOS A, B AND C

4.1. Trace Gases

We define three trace gas scenarios to provide an indication of how the predicted climate trend depends upon trace gas growth rates. Scenario A assumes that growth rates of trace gas emissions typical of the 1970s and 1980s will continue indefinitely; the assumed annual growth averages about 1.5% of current emissions, so the net greenhouse forcing increases exponentially. Scenario B has decreasing trace gas growth rates, such that the annual increase of the greenhouse climate forcing remains approximately constant at the present level. Scenario C drastically reduces trace gas growth between 1990 and 2000 such that the greenhouse climate forcing ceases to increase after 2000.

Scenario A is easy to replicate: each year, increase emissions by 1.5% on the previous year. Scenario B recognizes that emissions are growing and that policy takes time to be enacted, so to bring emissions back down to the then-current level (of 1987 or 1988) some reduction is required. Scenario C, one presumes, requires emissions such that atmospheric trace gas levels stop increasing. As trace gas levels were increasing in 1988, and (from Scenario B) holding emissions at the 1988 level would continue to increase atmospheric levels, emissions by the year 2000 would have to be considerably lower than in 1988. They might be above zero, as small amounts of emissions may not have an appreciable impact on atmospheric levels.

The graph formed Fig. 3. of James Hansen’s testimony to Congress. The caption to the graph repeats the assumptions.

Scenario A assumes continued growth rates of trace gas emissions typical of the past 20 years, i.e., about 1.5% yr-1 emission growth; scenario B has emission rates approximately fixed at current rates; scenario C drastically reduces trace gas emissions between 1990 and 2000.

In other words, Scenario B fixes annual emissions at the levels of the late 1980s, whilst Scenario C sees drastic emission reductions.

James Hansen in his speech gave a more succinct description.

We have considered cases ranging from business as usual, which is scenario A, to draconian emission cuts, scenario C, which would totally eliminate net trace gas growth by year 2000.

Note that the resultant warming from fixing emissions at the current rate (Scenario B) is much closer to that of Scenario A (emissions growth of +1.5% year-on-year) than to that of Scenario C, which stops global warming. Yet Scenario B assumes that global policy is successfully implemented to stop the rise in global emissions.

Which Scenario most closely fits the Actual Data?

To understand which scenario most closely fits the data, we need to look at the trace gas emissions data. There are a number of sources, which give slightly different results. One source, and the one that ought to be the most authoritative, is the IPCC Fifth Assessment Report WG3 Summary for Policy Makers, whose graphic SPM.1 is reproduced in Figure 2.

Figure 2 : AR5 WG3 SPM.1 Total annual anthropogenic GHG emissions (GtCO2eq/yr) by groups of gases 1970-2010. FOLU is Forestry and Other Land Use.


Note that in Figure 2 the other greenhouse gases – F-Gases, N2O and CH4 – are expressed in CO2 equivalents. It is very easy to see which of the three scenarios fits. The historical data up until 1988 shows increasing emissions. After that date emissions have continued to increase. Indeed there is some acceleration, stated on the graph, comparing 2000-2010 (+2.2%/yr) with 1970-2000 (+1.3%/yr). In 2010 GHG emissions were not similar to those of the 1980s (about 35 GtCO2e) but much higher. By implication, Scenario C, which assumed draconian emissions cuts, is the furthest from the reality of what has happened. Before considering how closely the Scenario A forecast compares to the actual temperature rise, the question is therefore how closely the actual growth in emissions matches the +1.5%/yr of Scenario A.

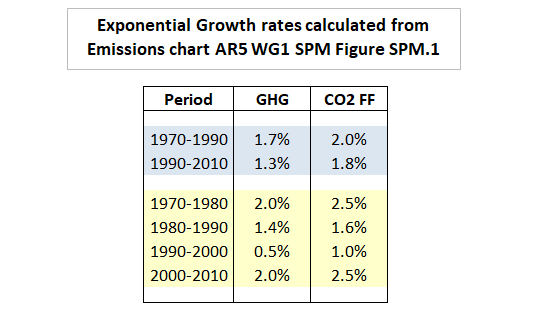

From my own rough calculations, total GHG emissions from 1990 to 2010 rose about 29% or 1.3% a year, compared to 41% or 1.7% a year in the period 1970 to 1990. Exponential growth of 1.3% is not far short of the 1.5% assumed in Scenario A. The assumed 1.5% growth rate would have resulted in 2010 emissions of 51 GtCO2e instead of the 49 GtCO2e estimated, a difference well within the margin of error. That is, actual trends over 20 years were pretty much the business as usual scenario. The narrower category of CO2 emissions from fossil fuels and industrial sources rose about 42% or 1.8% a year from 1990 to 2010, compared to 51% or 2.0% a year in the period 1970 to 1990, which is above the Scenario A rate.
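The arithmetic behind these rough figures can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 1990 starting level of 38 GtCO2e is the round figure used for the scenario comparison later in this post, the percentage rises are the rough values quoted above, and the helper names `annual_rate` and `project` are hypothetical ones made up for this sketch.

```python
def annual_rate(total_rise, years):
    """Convert a total fractional rise over `years` into a compound annual rate."""
    return (1.0 + total_rise) ** (1.0 / years) - 1.0


def project(start, rate, years):
    """Grow `start` at a constant compound annual `rate` for `years` years."""
    return start * (1.0 + rate) ** years


# Rough total rises in all GHG emissions, as quoted in the text.
ghg_1970_1990 = annual_rate(0.41, 20)
ghg_1990_2010 = annual_rate(0.29, 20)

# Assumption: 1990 emissions of about 38 GtCO2e, grown at Scenario A's 1.5% a year.
scenario_a_2010 = project(38.0, 0.015, 20)

print(f"GHG 1970-1990: {ghg_1970_1990:.1%} a year")
print(f"GHG 1990-2010: {ghg_1990_2010:.1%} a year")
print(f"Scenario A in 2010: {scenario_a_2010:.0f} GtCO2e")
```

The same two helpers can be applied to the CO2-only figures (42% and 51%) to check the rates quoted for fossil fuel and industrial emissions.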

The breakdown is shown in Figure 3.

Figure 3 : Rough calculations of exponential emissions growth rates from AR5 WG3 SPM Figure SPM.1

These figures are somewhat out of date. The UNEP Emissions Gap Report 2017 (pdf) estimated GHG emissions in 2016 at 51.9 GtCO2e. This represents a slowdown in emissions growth in recent years.

Figure 4 compares the actual decadal exponential growth trends in estimated GHG emissions (with a linear trend to the 51.9 GtCO2e of emissions in 2016 from the UNEP Emissions Gap Report 2017 (pdf)) with my interpretations of the scenario assumptions. That is, from 1990, Scenario A has 1.5% annual growth in emissions; Scenario B has emissions reducing from 38 to 35 GtCO2e (the level of 1987) during the 1990s and then continuing at that level indefinitely; and Scenario C has emissions reducing to 8 GtCO2e during the 1990s.

Figure 4 : Hansen et al 1988 emissions scenarios, starting in 1990, compared to actual trends from UNIPCC and UNEP data. Scenario A – 1.5% pa emissions growth; Scenario B – Linear decline in emissions from 38 GtCO2e in 1990 to 35 GtCO2e in 2000, constant thereafter; Scenario C – Linear decline in emissions from 38 GtCO2e in 1990 to 8 GtCO2e in 2000, constant thereafter.

Figure 4 overstates the differences between A and B, as it is the cumulative emissions that matter. From my calculations, although in Scenario B 2010 emissions are 68% of Scenario A, cumulative emissions for the period 1991-2010 are 80% of Scenario A.
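The comparison of annual and cumulative emissions can be reproduced with a short sketch. It follows my interpretation of the scenarios in the Figure 4 caption (38 GtCO2e in 1990, linear declines to 2000, constant thereafter) and simply sums annual values for 1991-2010. The function names are hypothetical, and the paths are my reading of the scenario descriptions, not data taken from Hansen et al.

```python
def scenario_a(year, start=38.0, growth=0.015, base_year=1990):
    """Scenario A: emissions grow by 1.5% a year from the 1990 level."""
    return start * (1.0 + growth) ** (year - base_year)


def linear_then_flat(year, start, end, base_year=1990, flat_year=2000):
    """Linear change from `start` in 1990 to `end` in 2000, constant afterwards."""
    if year >= flat_year:
        return end
    fraction = (year - base_year) / (flat_year - base_year)
    return start + (end - start) * fraction


years = range(1991, 2011)  # 1991 to 2010 inclusive
a = [scenario_a(y) for y in years]
b = [linear_then_flat(y, 38.0, 35.0) for y in years]  # Scenario B
c = [linear_then_flat(y, 38.0, 8.0) for y in years]   # Scenario C

ratio_2010 = b[-1] / a[-1]             # Scenario B as a share of A in 2010
ratio_cumulative = sum(b) / sum(a)     # Scenario B as a share of A, 1991-2010

print(f"Scenario B / A, 2010 emissions: {ratio_2010:.0%}")
print(f"Scenario B / A, cumulative 1991-2010: {ratio_cumulative:.0%}")
print(f"Scenario C / A, cumulative 1991-2010: {sum(c) / sum(a):.0%}")
```

Summing discrete annual values is a simplification, but it is enough to show why the gap between A and B looks smaller in cumulative terms than in the final-year figures.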

Looking at cumulative emissions is consistent with the claims from the various UN bodies that limiting global temperature rise to 1.5°C or 2.0°C of warming relative to some point is contingent on a certain volume of emissions not being exceeded. One of the most recent examples is the key graphic from the UNEP Emissions Gap Report 2017.

Figure 5 : Figure ES.2 from the UNEP Emissions Gap Report 2017, showing the projected emissions gap in 2030 relative to 1.5°C or 2.0°C warming targets.

Warming forecasts against “Actual” temperature variation

Hansen’s testimony was a clear case of political advocacy. By holding emissions constant in Scenario B, the authors are making a bold policy statement. That is, stopping global warming (and thus preventing potentially catastrophic changes to climate systems) requires draconian reductions in emissions. Simply maintaining emissions at the levels of the mid-1980s will make little difference. That is due to the forcing being related to the cumulative quantity of emissions.

Given that the data is not quite in line with Scenario A, if the theory is correct, then I would expect:-

- Warming trend to be somewhere between Scenario A and Scenario B. Most people accept that the equilibrium climate sensitivity (ECS) of the Hansen model, 4.2ºC for a doubling of CO2, was too high. The IPCC now uses 3ºC for ECS. More recent research has it much lower still. However, although the rate of warming might be less, the pattern of warming over time should be similar.

- Average temperatures after 2010 to be significantly higher than in 1987.

- The rate of warming in the 1990s to be marginally lower than in the period 1970-1990, but still strongly positive.

- The rate of warming in the 2000s to be strongly positive and marginally higher than in the 1990s.

From the model’s Scenario C, there seems to be about a five year lag between changes in emission rates and changes in temperatures. However, the actual temperature data shows quite a different warming pattern. Five years ago C3 Headlines had a post 2013: The NASA/Hansen Climate Model Prediction of Global Warming Vs. Climate Reality. The main graphic is in Figure 6.

Figure 6 : C3 Headlines – NASA Hansen Prediction Vs Reality

The first thing to note is that the Scenario Assumptions are incorrect. Not only are they labelled as CO2, not GHG emissions, but they are all stated wrongly. Stating them correctly would show a greater contradiction between forecasts and reality. However, the Scenario data appears to be reproduced correctly, and the actual graph appears to be in line with a graphic produced last month by Gavin Schmidt in his defense of Hansen’s predictions.

The data contradicts the forecasts. Although average temperatures are clearly higher than in 1987, they are not in line with the forecast of Scenario A, which is closest to the actual emissions trends. The rise is way below the 70% of the model forecast implied by inputting the lower IPCC climate sensitivity and allowing for GHG emissions growth being fractionally below the 1.5% per annum of Scenario A. But the biggest problem is where the main divergence occurred. Rather than warming accelerating slightly in the 2000s (after a possible slowdown in the 1990s), there was no slowdown in the 1990s, but in the 2000s warming either collapsed to zero or was massively reduced, depending on which data set is used. This is in clear contradiction of the model. Unless there is an unambiguous and verifiable explanation (rather than a bunch of waffly and contradictory excuses), the model should be deemed to be wrong. There could be largely unknown natural factors or random data noise that could explain the discrepancy. But equally (and quite plausibly) those same factors could have contributed to the late twentieth century warming.

This simple comparison has an important implication for policy. As there is no clear evidence to link most of the observed warming to GHG emissions, by implication there is no clear support for the belief that reducing GHG emissions will constrain future warming. But reducing global GHG emissions is merely an aspiration. As the graphic in Figure 5 clearly demonstrates, over twenty months after the Paris Climate Agreement was signed there is still no prospect of aggregate GHG emissions falling through policy. Hansen et al. 1988 is therefore a double failure, both as a scientific forecast and as a tool for policy advocacy in terms of reducing GHG emissions. If only the supporters would realize their failure, the useless and costly climate policies could be dismantled.