In politics, most people’s views can be placed on a spectrum, but when it comes to climate change / global warming there is no such perspective. Views are often polarized, particularly by those who believe in a future climate catastrophe. This is an initial attempt at a grid aimed at clarifying the issues. Your constructive advice is sought on how this might be improved.

When there are contentious or politicized issues, a spectrum of opinions emerges where there is free discussion of ideas. This is true in politics and the Christian religion. In both, there is not just a one-dimensional spectrum of ideas, but multi-dimensional perspectives. For instance, in politics it has been argued that the left-right spectrum should be split into economic and moral issues. The United States Libertarian Party has had a simple survey running since 1995. A more comprehensive (but still American-orientated) survey is the Political Spectrum Quiz.

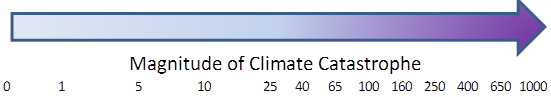

Another idea comes from Greg Craven, who did a series of zany YouTube videos on climate change, notably “The Most Terrifying Video You’ll Ever See” and “How it all ends”. He claimed that for the mass of non-scientists it was best to take a risk-based approach, grading the science on the credibility of those who made the claims. One objection to his analysis is that it was based on polar extremes: either the worst climate catastrophe imaginable, or it is all a hoax. I proposed that there was a spectrum of possible outcomes, with the apocalyptic catastrophe at one extreme and the null outcome at the other. Basically there is a spectrum of views.

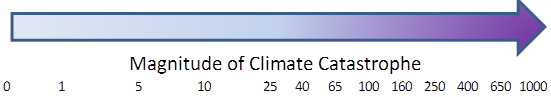

For this spectrum, the possible scenarios range from the null outcome on the left to a huge climate catastrophe on the right.

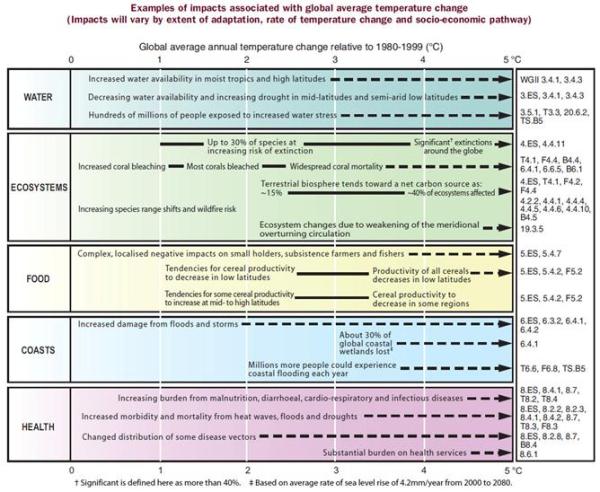

Craven’s argument was to consider either 0 or 1000, whereas I claimed that the UNIPCC scenarios (representing the “consensus” of climate scientists) allowed for a fair range of outcomes. I have provided a log scale, as this puts clear distance between someone who believes in a low risk of extreme catastrophe and someone who says there is no risk at all. For instance, if someone believes that there is a 1% chance of the worst case (a loss of 1000), a 9% chance of a loss of 100 and a 90% chance of a loss of 10, then their score would be 0.01*1000 + 0.09*100 + 0.90*10 = 28. In other words, for that person, especially if they are risk averse, there is still a very significant issue that should justify serious consideration of some type of global policy action.
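The score in the example above is a probability-weighted average of the possible losses. As a minimal sketch, the arithmetic can be checked in a few lines of Python. The function name `expected_loss`, the variable `beliefs` and the check that the probabilities sum to 1 are my own illustrative assumptions, not part of any established method; the log-scale position is simply taken here as the base-10 logarithm of the score.

```python
import math


def expected_loss(scenarios):
    """Return the probability-weighted loss for (probability, loss) pairs.

    Hypothetical helper for illustration only.
    """
    total_probability = sum(p for p, _ in scenarios)
    # Assumption: the scenarios are exhaustive, so probabilities sum to 1.
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * loss for p, loss in scenarios)


# Beliefs from the example: 1% worst case, 9% loss of 100, 90% loss of 10.
beliefs = [(0.01, 1000), (0.09, 100), (0.90, 10)]
score = expected_loss(beliefs)

# Position on a log scale (assumed here to be log base 10 of the score).
log_position = math.log10(score)

print(round(score, 2), round(log_position, 2))
```

On a base-10 log scale running from 1 to 1000, a score of 28 sits a little under halfway along, which is why even a small belief in the worst case keeps a person well away from the null outcome.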

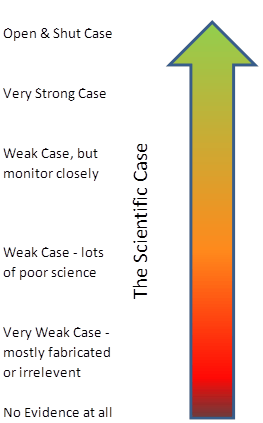

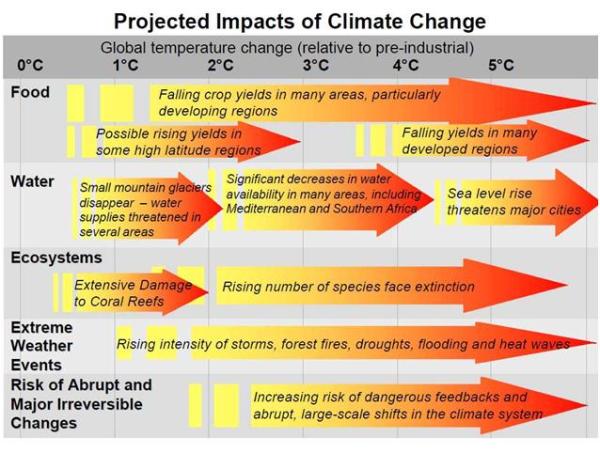

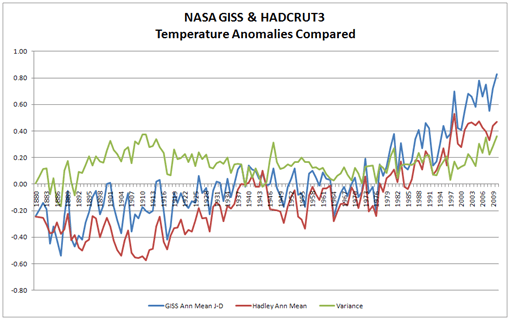

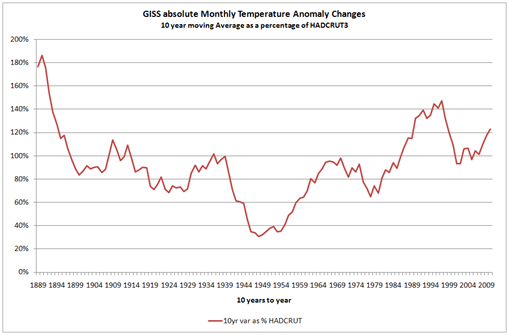

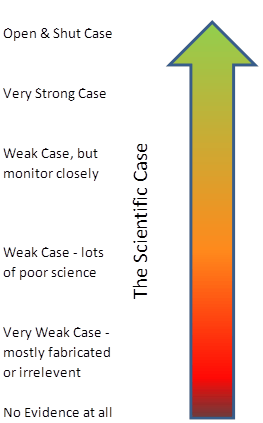

But this measure of the prospective level of climate catastrophe needs to be based upon something. That something is scientific evidence, not people’s intuitions or gut feelings. If we imagine that the uncertainties can be measured as risks (as neoclassical economists do) then the worst case scenario can only be attained if there is near certain, unambiguous scientific evidence in support of that prediction. If the evidence is weak statistically, gives highly variable results depending on methodology or data sets, or is only tangential to the prediction, then a risk weighting lower than 1 will need to be ascribed. For an overall picture, we need to ascribe a weighting to the body of evidence. I propose a traffic light system. In outline, green is for an overwhelming body of evidence, red is for no proper evidence whatsoever, and amber is for some weak evidence. Something along the following lines:

Basically, an unambiguous case for impending global catastrophe must have a substantial body of strong scientific evidence to substantiate that case, with little or no contrary evidence. I will develop on another day the analogy with evidence presented to a criminal court by the prosecution. However, for the present, the relevant part of the analogy is that this conclusion is only reached once the evidence has withstood independent cross-examination.

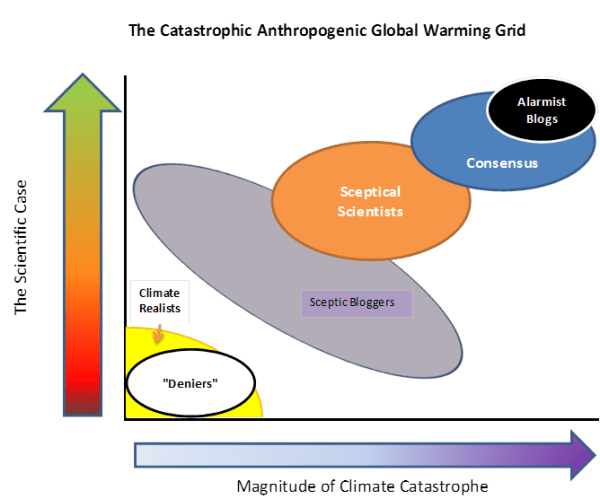

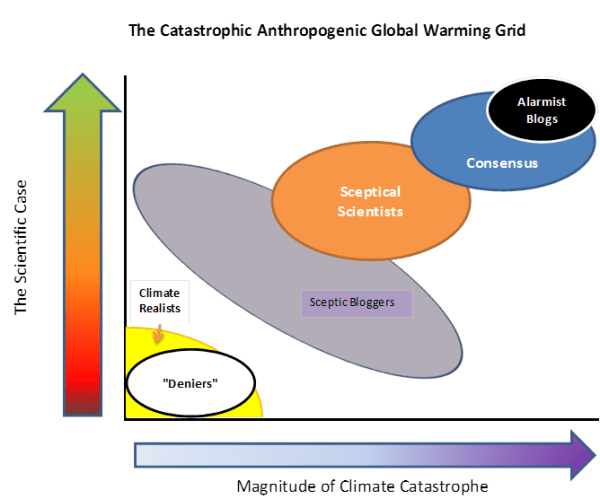

This gives us a grid with the magnitude of the climate catastrophe on the X axis, and the scientific case on the Y axis. The grid, with my first opinion of where various groups are placed, is given below. I know it is controversial; the whole aim is to get people to start thinking constructively about their views.

Alarmist Blogs (for instance Skeptical Science and Desmogblog) have an extreme black-and-white world view in which they are always right, and anyone who disagrees is the polar opposite. “Deniers” is a bogeyman construct of their making.

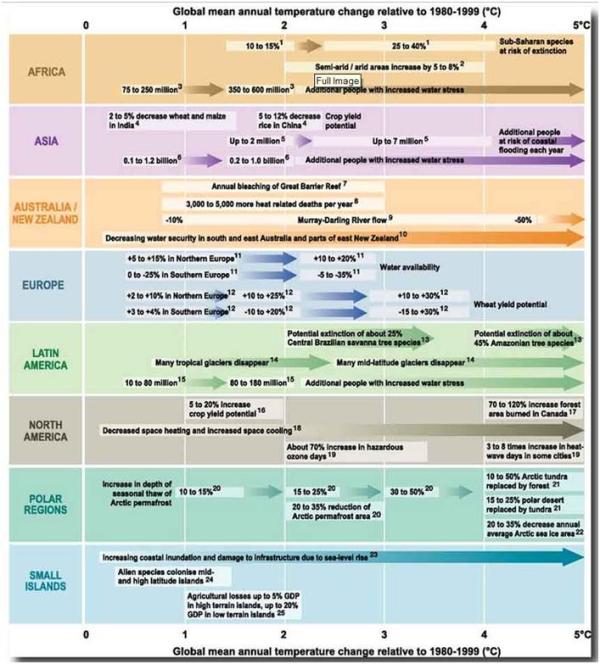

If one reads the detail of the UNIPCC AR4 report, the “Consensus” of climate scientists allows for some uncertainties, and for scenarios which are not so catastrophic.

The more Sceptical Scientists, such as Richard Lindzen, Roger Pielke Snr and Roy Spencer, view increasing greenhouse gases as a serious issue for study. However, they view the evidence as being both much weaker than the “consensus” holds and pointing to a much less alarming future.

The most popular Sceptic Blogs, such as Wattsupwiththat, Climate Audit and Bishop Hill, I characterise as having a position of “The stronger the evidence, the weaker the relevance”. That is, they allow for a considerable spread of views, but neither dismiss the rise in CO2 as of no consequence, nor claim that the available evidence is strong.

Finally, there are the Climate Realists, such as Joanne Nova and the British Climate Realists website. They occupy a similar position to the “deniers”, but from a much more substantial position. They can see little or no evidence of catastrophe, but huge amounts of exaggeration dressed up as science.

What are your opinions? Where do you think you lie on the grid? Is there an alternative (and more informative) way of characterizing the different positions?