Updated 08/09/13 am

Updated 08/09/13 pm – M The Null Hypothesis

Climate Science is a hugely complex subject, dealing with phenomena that are essentially chaotic, with vague patterns. Yet the promotion of that science is banal and superficial. Below are some of the fundamentals that have been addressed in established areas like economics, philosophy and English Common Law, but which the Climate Science community ignores. Most overlap, or are different ways of looking at the same thing.

A Positive and Normative

I do not hold with the logical positivism in vogue in the early part of the 20th century, which later underpinned the “positive economics” ideas of Milton Friedman that were popular from the 1950s to the 1980s. But it made the useful distinction between positive statements (empirically based statements) and normative statements (what ought to be). The language of climate science is heavily value-laden. There is no attempt to distinguish positive from normative in language, nor to highlight that competency in the sphere of positive statements is not necessarily an indication of competency in normative ones. For instance, when scientists make statements about the moral imperative for policy, they may overemphasize the moral questions raised, as they may be too close to the subject. In fact, believing that rising greenhouse gas levels cause a worsening of climate can lead to a bias towards the simplified solution of constraining that growth. It takes understanding of the entirely separate fields of economics and public policy-making to determine whether this is achievable, or the best solution.

B Boundary conditions

There is no clear definition of science in general or the study of climate in particular. The only underlying definitions are tantamount to saying that science is what scientists do, and scientific statements are those made by scientists. Without a clear definition of science, scientists end up making unsupported statements, outside their area of competency. For instance, scientists often make statements about the economic case for policy. With the term “climate change” encompassing both, the general public are misled into believing that “climate scientists” cover both areas.

C Open and closed questions

A closed question can be answered by a single word. The narrowest closed questions are those that can be answered “Yes/No” or “True/False”. Open questions need fuller answers. Climate change is not just about closed questions. It is about how much, how likely, when and where. In terms of boundaries, there is no closed question of science versus non-science – with the boundary in actual work being between that published in a peer-reviewed journal and that published outside. That leads on to the non-triviality, quality and relevancy conditions.

D Trivial v. Non-trivial

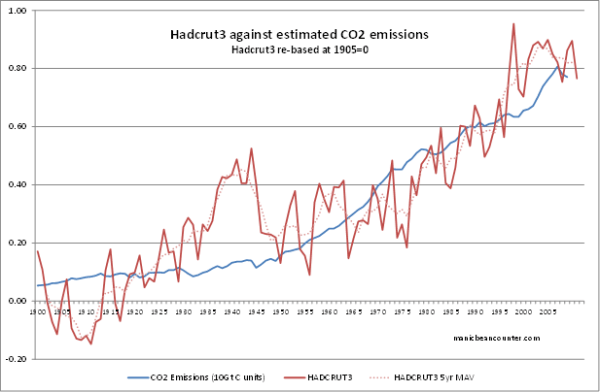

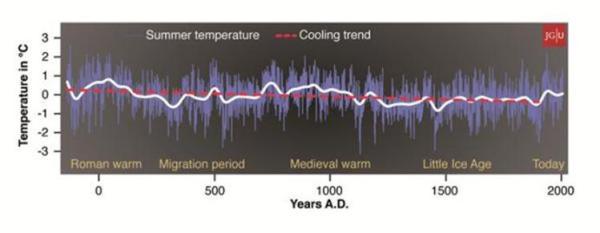

The strongest evidence for global warming suggests a trivial issue. In one aspect this is true by definition. The non-trivial part – the potential climate catastrophe that policy seeks to avert – relies upon future projections. These rely on temperature rises many times greater than so far experienced. Projections will always be weaker than the actual evidence. But there is an empirical aspect as well: the actual trends may be far below those predicted (surface temperature warming trends), or fail to show a switch to a path pointing to catastrophe (acceleration in the rate of sea level rise).

E Quality

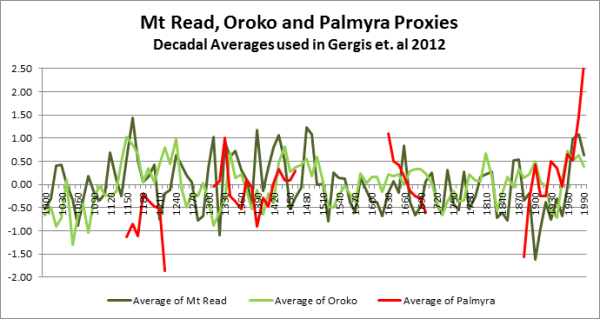

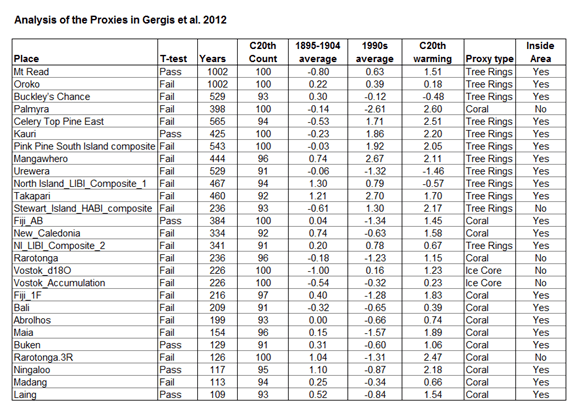

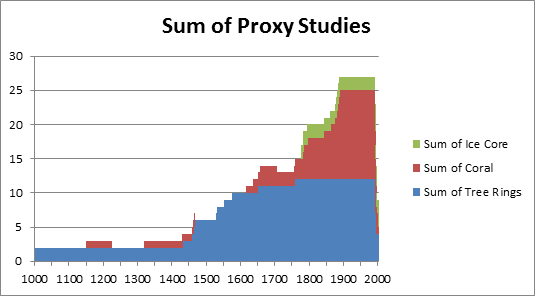

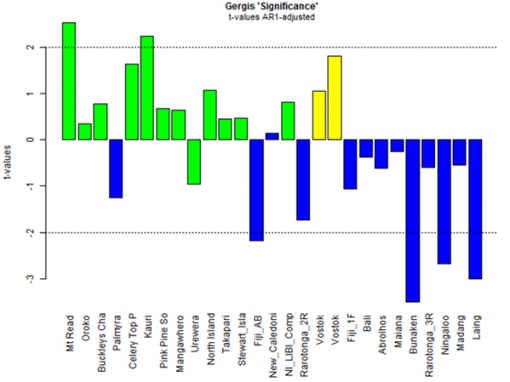

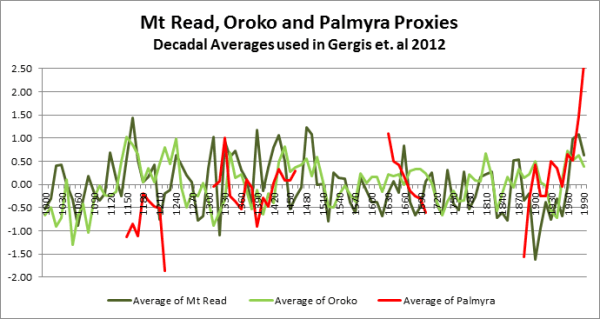

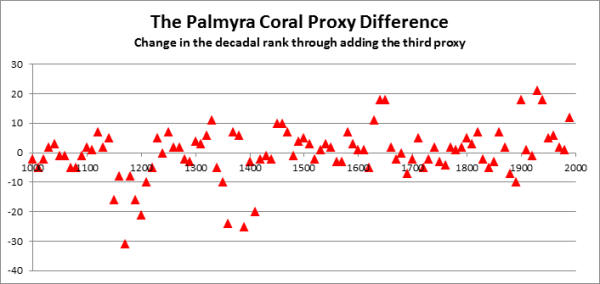

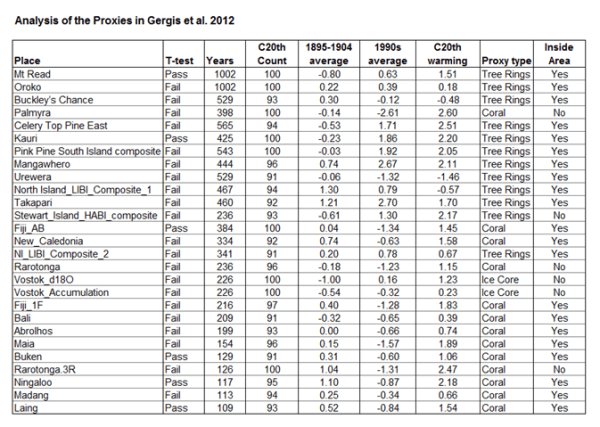

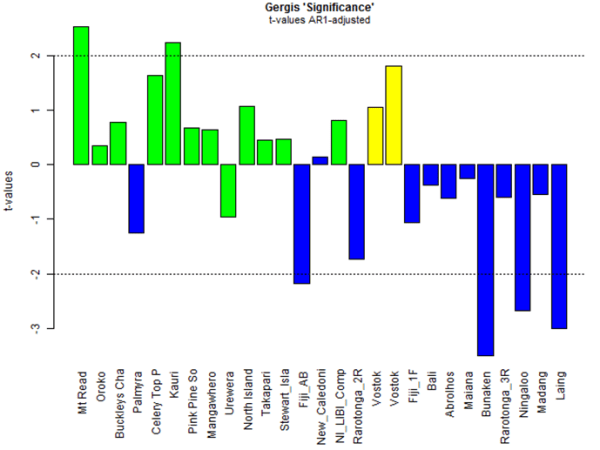

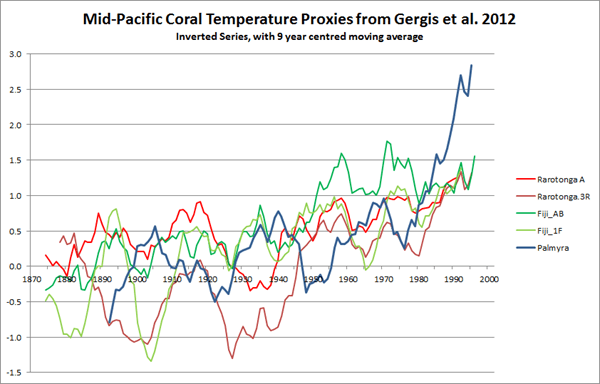

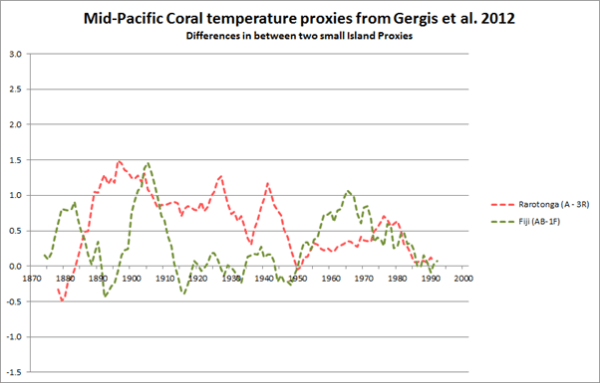

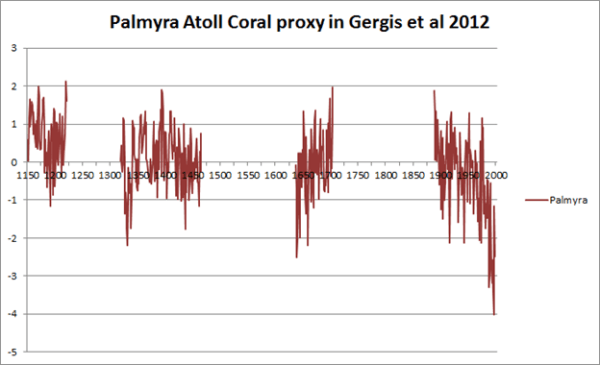

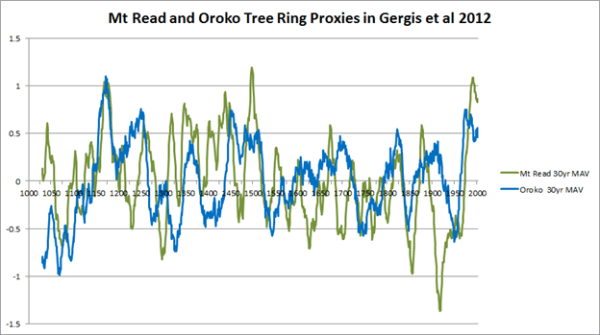

There is good quality science and poor quality science. Peer review should help, but (as suggested in the Climategate emails) acceptance or rejection can be based on criteria other than science. In most areas of science, and indeed in many professions, efforts have been made to improve the quality of results. One minor step towards improvement of quality is the insistence on publishing the data behind peer-reviewed articles. This has led to the quick exposure of shoddy work like the Gergis et al 2012 and LOG12 papers, whereas it took many years of persistence by Steve McIntyre to get the full data on Keith Briffa’s deeply flawed Yamal tree-ring temperature proxy. However, as the forthcoming UNIPCC AR5 report will demonstrate, increasing quality is sacrificed in promoting climate catastrophism.

F False Positives and False Negatives

A particular subset of the quality issue is that of false positives and false negatives. With activists pressuring governments and scientific bodies to agree with the dogma, and the promotion of pejorative language (e.g. deniers, fake skeptics), misattribution of significant weather events to climate change is a consequence. Whilst in cancer screening there have been efforts made to reduce the number of false positives and false negatives, in climate science there seems to be every effort to increase the number of false positives. Examples are Superstorm Sandy, which hit New York state last year, the extreme heat wave in Europe in 2003, and the low sea ice point in September 2012.
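For readers less familiar with the screening terminology, here is a minimal sketch of how the two error rates are usually calculated from a table of outcomes. The function name and the counts are purely illustrative assumptions, not data from any real screening programme or climate study.

```python
def error_rates(tp: int, fp: int, tn: int, fn: int) -> tuple:
    """Return (false positive rate, false negative rate) from outcome counts.

    tp: true positives  (effect present, test says present)
    fp: false positives (effect absent,  test says present)
    tn: true negatives  (effect absent,  test says absent)
    fn: false negatives (effect present, test says absent)
    """
    false_positive_rate = fp / (fp + tn)  # share of genuine negatives wrongly flagged
    false_negative_rate = fn / (fn + tp)  # share of genuine positives that were missed
    return false_positive_rate, false_negative_rate


# Hypothetical counts, for illustration only.
fpr, fnr = error_rates(tp=80, fp=10, tn=90, fn=20)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

Loosening the criteria for attributing an event to a cause will tend to move cases from the "tn" cell into the "fp" cell, which raises the first of these rates.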

G Relevancy and significance

Some pieces of information, or scientific papers, are more important than others. The vast majority of papers published are on trivial issues and/or fail to make a lasting impact. In terms of catastrophic global warming, most papers in the field are tangential to the subject. The same is true of items of information, statistics and opinions.

H Necessary and Sufficient

For a climate policy to give net benefits, a number of conditions are necessary, both in the science (greenhouse gas effect, significant warming, adverse consequences) and in the policy area (policy with theoretical net benefits > costs of doing nothing, large enough policy area, effective policy management). For policy success (net policy benefits > costs of doing nothing), all of these are to some extent necessary. For policy failure, it is sufficient for just one of the necessary conditions to fail. It does not matter whether this is

– climate sensitivity being much lower than assumed

– or adaptation at the non-governmental local level is much more effective than assumed

– or the net adverse consequences of any given amount of warming are grossly exaggerated

– or the theoretical economic case for policy is flawed (such as demand for energy being far more inelastic with respect to price over time than assumed, or renewable energy not being a close substitute for fossil fuel energy)

– or the actual policy enacted does not encapsulate the economic theory, diluting or nullifying the effectiveness

– or the policy is unilateralist, where success requires the vast majority of the biggest economies to participate

– or the policy on paper is potentially successful, but it is not project managed to drive through the maximum benefits at least cost
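The logic of the list above can be put as a short sketch: success needs every necessary condition to hold, so a single failure is enough for the policy to fail. The condition names below are my own shorthand for the items in the text, and treating each as a simple true/false is a simplifying assumption, since in practice each holds only to some degree.

```python
from typing import Dict, List

# Hypothetical labels paraphrasing the necessary conditions in the text.
NECESSARY_CONDITIONS = [
    "greenhouse_gas_effect",
    "significant_warming",
    "adverse_consequences",
    "theoretical_net_benefits",
    "large_enough_policy_area",
    "effective_policy_management",
]


def policy_succeeds(conditions: Dict[str, bool]) -> bool:
    """Success requires every necessary condition; a missing entry counts as failed."""
    return all(conditions.get(name, False) for name in NECESSARY_CONDITIONS)


def failed_conditions(conditions: Dict[str, bool]) -> List[str]:
    """List the necessary conditions that do not hold."""
    return [name for name in NECESSARY_CONDITIONS if not conditions.get(name, False)]


# Illustration: everything holds except effective management.
example = {name: True for name in NECESSARY_CONDITIONS}
example["effective_policy_management"] = False
print(policy_succeeds(example))    # False
print(failed_conditions(example))  # ['effective_policy_management']
```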

I Levels of evidence

In legal systems, especially in criminal law, it has long been recognized that there are different qualities of evidence. The strongest is DNA, fingerprints, or catching somebody in the act. There is then secondary evidence from witnesses. There is then circumstantial evidence, such as the accused being near to the scene at the time, with no clear reason to be there. The lowest form of evidence, and usually rejected, is hearsay evidence. That is, unsupported opinions given by people with little interest in the case. The judicial process also views more highly evidence that is corroborated by other pieces of evidence, whilst evidence that on its own seems quite strong is downgraded or ruled out by contrary evidence, or alternative explanations.

J Values of the Legal Process in Reverse

Climate science fails to grapple with the grading of evidence, as one of its strongest arguments – consensus amongst scientists – is actually hearsay. Improving the quality of evidence would mean critically examining past forecasts in the light of evidence. In the judicial process, creating prejudice in the eyes of the jury against the defendants, or seeking to deny the accused a defence, is forcefully dealt with. In climate science, creating prejudice and denying a voice to those who question the climate change dogmas is viewed as part of the cause.

K Underdetermination Thesis

“The underdetermination thesis – the idea that any body of evidence can be explained by any number of mutually incompatible theories”

Quote from Kuhn vs Popper – Steve Fuller 2003

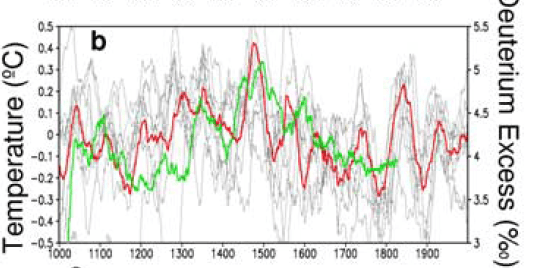

The global warming hypothesis is but one of a number of hypotheses trying to explain why climate changes over time. The problem is not just one of a potential number of competing theories. It is that there might be a number of different elements influencing climate, with the various weightings dependent on the method and assumptions in analysis. The task is not just to determine which one, but which ones, and to what extent they interplay.

L Vulnerability

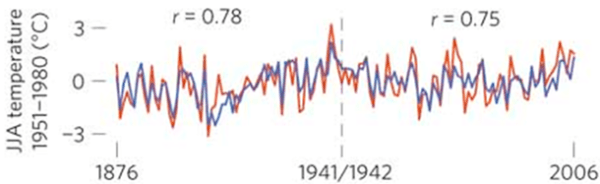

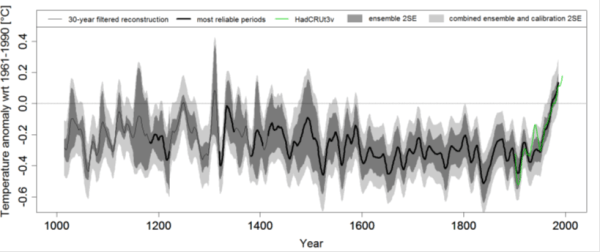

Every scientific hypothesis is vulnerable to being refuted. Human-caused catastrophic global warming (CAGW) is based on extremely tentative assumptions, and is a forecast of future events. As the warming of the past one hundred years is tiny compared to that forecast to happen in the future, and that warming is partly obscured by natural variations, the signal of future catastrophe will be weak. The issue is further clouded by the lack of long periods of data on climate variability before human emissions became significant. That is, data prior to 1945, when the post-war economic boom led to a huge increase in human emissions. Assuming the forecasts of CAGW are correct, the hypothesis becomes incredibly vulnerable to rejection.

But if CAGW is false, or massively exaggerated, then the hypothesis is deeply susceptible to confirmation bias by those who only look to find evidence of its truth. The core belief of climate science is that the catastrophist hypothesis is true and the job of the “science” is to reveal this truth. The core mission of many co-believers is to stop any questioning of these core beliefs. The alternative view is that evidence for CAGW has become stronger over the last twenty-five years, making the hypothesis less vulnerable over time. This can be tested by looking at the success of the short-term predictions.

M The Null Hypothesis

Wikipedia’s definition is:

“In statistical inference of observed data of a scientific experiment, the null hypothesis refers to a general or default position: that there is no relationship between two measured phenomena, … Rejecting or disproving the null hypothesis – and thus concluding that there are grounds for believing that there is a relationship between two phenomena … – is a central task in the modern practice of science, and gives a precise sense in which a claim is capable of being proven false.”

It applies to AGW theory, as the hypotheses are empirical relationships. With highly complex, and essentially chaotic, systems it is only by confronting the data using a battery of statistical tests that you can disprove the null hypothesis. Without the null hypothesis, and without such rigorous testing, all the data and observations will only confirm what you want to believe. Some of the best established empirically-based hypotheses, like “HIV causes AIDS” and “long-term heavy smoking significantly reduces life expectancy” have been confronted with the null hypothesis many times against large, high quality data sets. At extremely high levels of significance, the null hypothesis of no relationship can be rejected.

It could be claimed that the null hypothesis is not applicable to AGW theory, as it forecasts something much worse happening than has so far been experienced. However, it is more important because of this. There is no bridge between reality and the theoretical relationships (with assumed magnitudes) in the climate models. The null hypothesis (general or default position) for testing against actual data is not that there is no relationship, but the double-negative of no non-trivial relationship. So the null hypothesis for testing “CO2 causes warming” is not “CO2 does not affect temperature”, but “CO2 has no non-trivial impact on warming”. The reason is that the claimed requirement for policy is avoidance of a climate catastrophe, with relationships being non-trivial in magnitude.
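The difference between the two null hypotheses can be shown with a rough sketch. It fits a straight line by ordinary least squares and then tests the slope one-sidedly against a threshold: a threshold of zero is the usual "no relationship" null, while a positive threshold stands for "no non-trivial relationship". The function names, the threshold and the numbers are my own illustrative assumptions, and the p-value uses a normal approximation rather than the exact t-distribution, so it is only reasonable for large samples. It also ignores autocorrelation, which matters for real temperature series.

```python
from math import sqrt
from statistics import NormalDist, mean


def slope_and_stderr(x, y):
    """Ordinary least squares slope of y on x, with its standard error."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual sum of squares, with n - 2 degrees of freedom.
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    stderr = sqrt(rss / (n - 2) / sxx)
    return slope, stderr


def p_value_against_threshold(slope, stderr, threshold):
    """One-sided p-value for H0: slope <= threshold (normal approximation).

    stderr must be positive; a perfect fit would give a division by zero.
    """
    z = (slope - threshold) / stderr
    return 1.0 - NormalDist().cdf(z)


# Hypothetical estimate: slope 2.0 with standard error 0.5 (arbitrary units).
p_no_relationship = p_value_against_threshold(2.0, 0.5, threshold=0.0)
p_no_nontrivial = p_value_against_threshold(2.0, 0.5, threshold=1.5)
print(p_no_relationship < 0.05)  # "no relationship" is rejected at the 5% level
print(p_no_nontrivial < 0.05)    # "no non-trivial relationship" is not rejected
```

With these made-up numbers the same estimate comfortably rejects the null of no relationship, yet fails to reject the null of no non-trivial relationship. Where the triviality threshold should sit is a separate judgement that the statistics cannot supply.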