Tamino attempts a hatchet job on a peer-reviewed paper on Australian sea levels. Whilst making some valid comments, he gives the misleading impression that he has overturned the main conclusion.

The sceptic blogs (GWPF, Wattsupwiththat, Jo Nova) are highlighting a front page article in The Australian about a peer-reviewed paper by P.J. Watson on Australian sea level trends over the past century.

The major conclusion is that:

“The analysis reveals a consistent trend of weak deceleration at each of these gauge sites throughout Australasia over the period from 1940 to 2000. Short period trends of acceleration in mean sea level after 1990 are evident at each site, although these are not abnormal or higher than other short-term rates measured throughout the historical record.”

The significance is that Watson shows a twentieth century rise of 17cm +/-5cm in Australia, whilst Government policy is based on a sea level rise of up to 90cm by the end of the century. If there is deceleration from an already low base, then government action is no longer required, potentially saving billions of dollars.

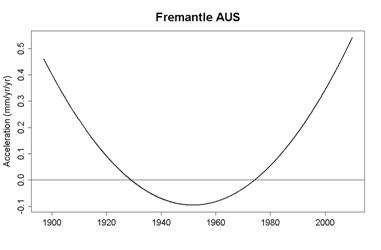

Looking for other viewpoints, I found a link from Real Climate to Tamino’s Open Mind blog. Given my last encounter with him, when he tried to defend the deeply flawed Hockey Stick (see my comments here and here), I was curious to know if this was another misdirection. I was not disappointed. Tamino manages to produce a graph showing the opposite of Watson’s result: rapid acceleration, not gentle deceleration.

How does he end up with this contrary result? In summary, he:

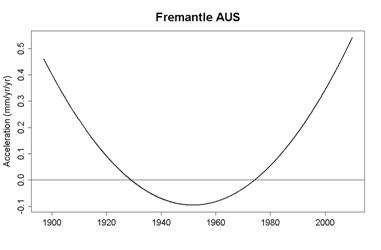

- Chooses just one of the four data sets used, namely the Fremantle data set.

- Makes valid, but largely irrelevant, criticisms to undermine the scientific and statistical competency of the author.

- Takes time to make the point about treating 20-year moving averages as data for analysis purposes. The problem is that this underweights the data points at the beginning and the end of the record. In particular, any recent acceleration will be understated.

- Criticizes the modelling method, with good reasons.

- Slips in an alternative model that may answer that criticism.

- Shows the results of that model output.
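The underweighting mentioned in the list above can be made concrete with a small sketch. It counts how many full moving-average windows each raw observation falls into. The record length of 100 annual values and the helper name `window_counts` are assumptions for illustration only, not taken from Watson's paper or Tamino's post.

```python
import numpy as np

def window_counts(n_points: int, window: int) -> np.ndarray:
    """Count how many full moving-average windows include each data point."""
    counts = np.zeros(n_points, dtype=int)
    # Slide a window of the given length along the record, one step at a time.
    for start in range(n_points - window + 1):
        counts[start:start + window] += 1
    return counts

# Hypothetical record: 100 annual values smoothed with a 20-year window.
counts = window_counts(n_points=100, window=20)
print("first three points:", counts[:3])
print("mid-record point:  ", counts[50])
print("last three points: ", counts[-3:])
```

In this set-up a mid-record year enters 20 of the smoothed values, whereas the first and last years each enter only one, so any change confined to the final few years has little influence on an analysis of the smoothed series.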

Tamino’s choice of the Fremantle data set should be justified, especially as Watson makes the following comment in his conclusion:

“There is evidence of significant mine subsidence embedded in the historical tide gauge record for Newcastle and a likelihood of inferred subsidence within the later (after the mid 1990s) portion of the Fremantle record. In this respect, it is timely and necessary to augment these relative tide gauge measurements with CGPS to gain accurate data on the vertical movement (if any) at each gauge site to measure eustatic sea level rise. At present only the Auckland gauge is fitted with such precision levelling technology.”

That is, the Fremantle data shows the largest acceleration towards the end, and this extra acceleration might be because land levels are falling, not because sea levels are rising.

The underweighting of recent data is important and could be dealt with by looking at shorter period moving averages and observing the acceleration rates. That is, looking at moving averages for 19, 18, 17 years etc. If the acceleration rates cross the 20cm a century rate with the shortening of the time periods then this will undermine Watson’s conclusion. Tamino does not do this, despite it being well within his capabilities. Until such an analysis is carried out, the claim in the abstract that “(s)hort period trends of acceleration in mean sea level after 1990 … are not abnormal or higher than other short-term rates measured throughout the historical record” is not undermined.
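A minimal sketch of that check follows, assuming annual data and a quadratic fit to each smoothed series. The series here is synthetic (a known acceleration plus random noise), not the Watson tide gauge data, and the function name `smoothed_acceleration` is hypothetical. It only illustrates the mechanics of shortening the window and watching the estimated acceleration.

```python
import numpy as np

def smoothed_acceleration(years, levels, window):
    """Fit a quadratic to the moving-averaged series; return 2 * t^2 coefficient."""
    kernel = np.ones(window) / window
    # Average the time axis with the same kernel so each smoothed value
    # is paired with the centre of its window.
    t = np.convolve(years - years[0], kernel, mode="valid")
    y = np.convolve(levels, kernel, mode="valid")
    c2, _, _ = np.polyfit(t, y, deg=2)
    return 2.0 * c2

# Synthetic stand-in for a gauge record (mm), NOT real observations:
# 1.2 mm/yr trend, 0.01 mm/yr^2 acceleration, plus random noise.
years = np.arange(1900, 2001, dtype=float)
t = years - years[0]
rng = np.random.default_rng(0)
levels = 1.2 * t + 0.5 * 0.01 * t**2 + rng.normal(0.0, 20.0, size=t.size)

for window in range(20, 9, -1):
    accel = smoothed_acceleration(years, levels, window)
    print(f"{window:2d}-year moving average: {accel:+.4f} mm/yr^2")
```

With real gauge data the same loop would show whether the estimated acceleration changes materially as the window shrinks and the most recent years gain weight.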

Instead of pursuing the point, Tamino then goes on to replace Watson’s modelling method with an arbitrary one plucked from the air, with the comment:

“Finally, we come to the other very big problem with this analysis: the model itself. Watson models his data as a quadratic function of time:

$$x(t) = \beta_0 + \beta_1 t + \beta_2 t^2$$

He then uses $2\beta_2$ (the 2nd time derivative of the model) as the estimated acceleration. But this model assumes that the acceleration is constant throughout the observed time span. That’s clearly not so. ”

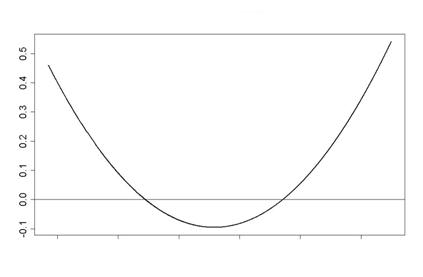

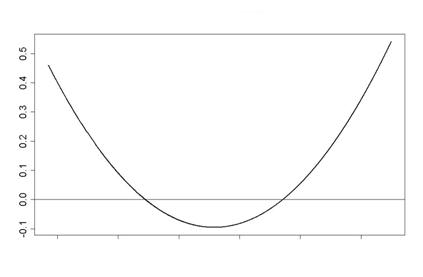

Instead he flippantly inserts a quartic equation, which gives the time-varying acceleration (the second derivative) as a quadratic function of time.
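To spell out the difference between the two models, here is a short worked derivation. The coefficient names $\beta_k$ are notation assumed for this illustration. Differentiating a quadratic in time twice leaves a constant, while differentiating a quartic twice leaves a quadratic:

```latex
\begin{aligned}
\text{Quadratic model:}\quad x(t) &= \beta_0 + \beta_1 t + \beta_2 t^2
  &\Rightarrow\quad \ddot{x}(t) &= 2\beta_2 \\
\text{Quartic model:}\quad x(t) &= \beta_0 + \beta_1 t + \beta_2 t^2 + \beta_3 t^3 + \beta_4 t^4
  &\Rightarrow\quad \ddot{x}(t) &= 2\beta_2 + 6\beta_3 t + 12\beta_4 t^2
\end{aligned}
```

So the quartic allows the acceleration to vary over the record, but only in the shape of a parabola in time.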

There are some problems with a quadratic function as a model of acceleration against time. Primarily, it has only one turning point. Extend the graph far enough and it grows without bound. So at some point in the future sea levels will reach the sun, and later the rate of rise will be faster than the speed of light. More seriously, if this quadratic is the closest fit to all the data series, it either has overstated, or soon will overstate, the actual acceleration. If used to project 90 years or more ahead, it will provide a grossly exaggerated projection based on known data.
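The runaway behaviour can be sketched numerically. The acceleration coefficients below are hypothetical, chosen only to show the shape of the problem, and are not fitted to any tide gauge record. Integrating a quadratic acceleration twice gives a rise that grows with the fourth power of the projection horizon.

```python
import numpy as np
from numpy.polynomial import Polynomial

# Hypothetical acceleration curve a(t) = 1e-4 * t^2 (mm/yr^2), t in years
# from the start of the projection. Illustrative values only.
accel = Polynomial([0.0, 0.0, 1e-4])

# Integrate twice (zero integration constants) to get the extra rise in mm
# attributable to this acceleration term.
rise = accel.integ(m=2)

for horizon in (45.0, 90.0, 180.0):
    print(f"{horizon:5.0f} years ahead: extra rise {rise(horizon):10.1f} mm")

# Doubling the horizon multiplies the projected extra rise by 2**4 = 16.
ratio = rise(90.0) / rise(45.0)
print("ratio of 90-year to 45-year projection:", ratio)
```

Whatever the fitted coefficients, any model of this form behaves the same way once extrapolated well beyond the data used to fit it.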

On this basis I have edited the graph to show all the inferences that can be drawn from it about rising sea levels in Australasia.

That is, a pure maths exercise in plotting a quadratic equation on a graph, unrelated to any reality.

An alternative to this is to claim simply that there is not sufficient valid data, or that the analysis is too poor, to draw any long-term inferences.

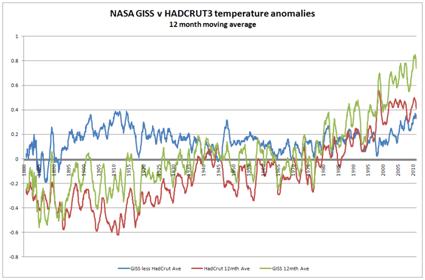

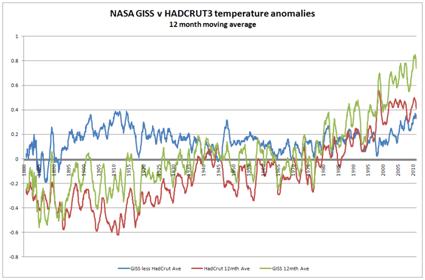

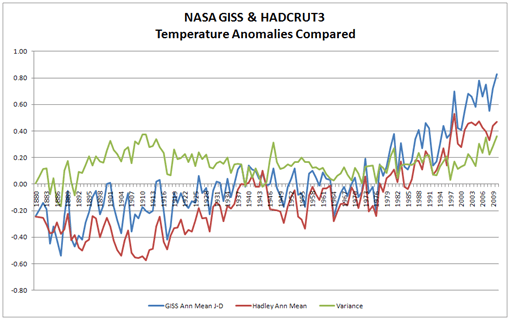

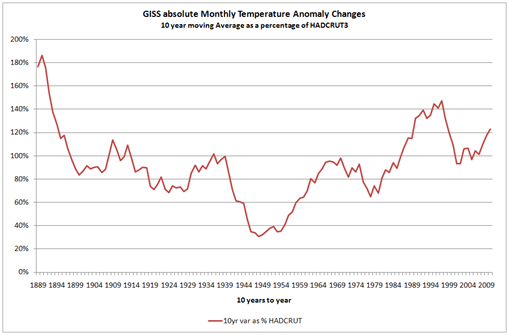

An alternative approach is to relate the sea level rises to the global temperature rises. Try comparing Watson’s graph of the rate of change in sea levels with the two major temperature anomaly series.

First it should be pointed out that Watson uses a twenty year moving average, so his data should lag the temperature data. The strong warming in the HADCRUT data in the 1920s to 1940s is replicated in the Fort Denison and Auckland sea level data. The lack of warming in the 1945 to 1975 period is replicated by marked deceleration in all four data sets from 1950 to the 1970s. The warming phase thereafter is similarly replicated in all four data sets. The current static phase, according to the more reliable HADCRUT data, should similarly be marked by a deceleration in sea level rise from an already low level. Further analysis of Watson’s data is needed to confirm this.

There is no reason in the existing data to believe that Watson’s conclusions are invalid. It is necessary to play fast and loose with the data and get lost in computer-game models to draw alternative inferences. Yet if a member of the Australian Parliament says legislation to cope with sea level rise should be withdrawn due to a new study, the alarmist consensus (who have just skimmed through Tamino’s debunking) will say that the study has been overturned. As a result, ordinary, coastal-dwelling people in Australia will continue to endure real hardship due to legislation based on alarmist exaggerations. (here & here).
