The Heretics Corner blog of Hengist McStone has a posting, “Why can’t we have climate truthers and 911 skeptics?” My comment, which I am about to submit, is:

Your statement that

“running through the heart of climate skepticism is the belief that truth about climate science has been suppressed”

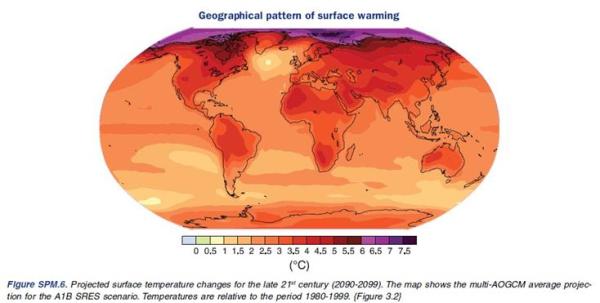

is a new one on me. Major climate sceptic blogs (WUWT, Jo Nova, BishopHill) do not claim that the truth is being hidden. Rather, they argue that a lot of spurious claims are based on very little evidence and on prophecies that fail to come true. They also point to other ways of looking at the data. They would agree that the public is being misled, but this is about the quality of the science, and ultimately the very definition of what is called “science”.

There is a huge weight of evidence for 911 being an act of al-Qaeda terrorism, with no assistance from the CIA. Similarly, there is a huge weight of evidence that millions of Jews were killed in the Holocaust, and that the average adult smoking 60 cigarettes a day from age 18 will live a much shorter and unhealthier life than the average adult who never inhales a single lungful.

Analogy with these different strongly-supported propositions can be in three areas. The first is based on the number of expert supporters of a proposition. The second is showing that there is similarly very strong evidence. The third is showing that techniques and standards from other areas are utilized.

Use of the first area is attempting to gain credibility by association. The second area would make analogy and name-calling superfluous. The third area is contradicted by claims that only expert climate scientists can divine the real truth.

Perhaps another analogy would help. Suppose that a popular and charismatic celebrity is accused of rape of young children. Despite the overwhelming evidence showing that person’s guilt the accused vehemently denies the charges and many who idolise that person make all sorts of spurious claims about the evidence and the victims. What would be the best course of action?

- Dispense with a trial due to the overwhelming evidence, then deny a voice to those who, not believing that their idol is guilty, question the evidence. Furthermore, mount a propaganda campaign against “evidence deniers” and “supporters of paedophilia”.

- Have a fair trial, even funding the defence, so that people can see the evidence being presented and challenged. If the evidence is overwhelming, the idolizers will be silenced.

I would suggest that the first course of action is taken by those whose dogmatic belief in their being right is based upon very little evidence. Widely applied, it would undermine people’s faith in the ability of the court system to achieve justice, thereby undermining the rule of law. Widespread practice will result in highly repressive regimes, often with discrimination against sections of the community, in particular anyone who challenges orthodoxy. The second approach might sometimes result in the guilty being found not guilty on a technicality, or being found guilty of lesser crimes. But pursuit of the highest standards will win over the doubters and gain support for the rule of law. This is the thinking that led to the development of the trial by jury system in Anglo-Saxon England. If you give people a fair and open trial, then others will trust authority. If you let a ruler or appointed expert divine the truth, then, even when they consistently get decisions right, there will be distrust. If they are perceived to get things wrong, or the process is hidden from public view, then that distrust will deepen.

In a similar fashion, supporters of climatology are making a massive public relations blunder. Rather than engaging in open debate and encouraging people to analyse the differing arguments, they make false analogies, misrepresent their opponents and discourage people from questioning or comparing differing points of view.