Jo Nova has an interesting and detailed guest post by Bob Fernley-Jones on the heavy homogenisation of rural site records in Australia by the Australian BOM.

I did a quick comment that was somewhat lacking in clarity. This post is to clarify my points.

In the post, Bob Fernley-Jones stated:

The focus of this study has been on rural stations having long records, mainly because the BoM homogenisation process has greatest relevance the older the data is.

Venema et al. 2012 stated (italics mine):

The most commonly used method to detect and remove the effects of artificial changes is the relative homogenization approach, which assumes that nearby stations are exposed to almost the same climate signal and that thus the differences between nearby stations can be utilized to detect inhomogeneities (Conrad and Pollak, 1950). In relative homogeneity testing, a candidate time series is compared to multiple surrounding stations either in a pairwise fashion or to a single composite reference time series computed for multiple nearby stations.

This assumption that nearby temperature stations are exposed to the same climate signal is standard practice. Victor Venema (who has his own blog) is a leading academic expert on temperature homogenisation. However, there are extreme examples where this assumption does not hold. One example is at the end of the 1960s in much of Paraguay, where average temperatures fell by one degree. As this fall was not replicated in the surrounding area, both the GISTEMP and Berkeley Earth homogenisations eliminated the anomaly, despite using very different homogenisation techniques. My analysis is here.
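To make the mechanism concrete, here is a minimal sketch of relative homogenisation on made-up data. It is not the BOM, GISTEMP or Berkeley Earth algorithm. The function names, the three neighbour offsets and the one-degree drop in 1970 are all my own assumptions for illustration. A candidate station is compared with a composite of its neighbours, the largest step in the difference series is located, and that step is then removed from the candidate.

```python
from statistics import mean

def difference_series(candidate, neighbours):
    """Candidate minus the composite (mean) of the neighbouring stations, year by year."""
    reference = [mean(vals) for vals in zip(*neighbours)]
    return [c - r for c, r in zip(candidate, reference)]

def largest_step(diff, min_seg=5):
    """Return (index, shift) for the split that gives the largest change in mean of diff."""
    best_idx, best_shift = None, 0.0
    for k in range(min_seg, len(diff) - min_seg + 1):
        shift = mean(diff[k:]) - mean(diff[:k])
        if abs(shift) > abs(best_shift):
            best_idx, best_shift = k, shift
    return best_idx, best_shift

# Hypothetical data: 40 years with a shared regional signal warming at 0.01 per year.
years = list(range(1950, 1990))
regional = [0.01 * (y - 1950) for y in years]
neighbours = [[t + offset for t in regional] for offset in (-0.2, 0.0, 0.3)]

# The candidate has a GENUINE local drop of one degree from 1970 (assumed for illustration).
candidate = [t - (1.0 if y >= 1970 else 0.0) for t, y in zip(regional, years)]

diff = difference_series(candidate, neighbours)
idx, shift = largest_step(diff)
break_year = years[idx]

# "Correct" the candidate by removing the detected step from the later segment.
adjusted = [c - shift if y >= break_year else c for c, y in zip(candidate, years)]
```

In this toy case the step is found at 1970 and the adjusted series ends up matching the regional signal. The method cannot tell a real local change from an artificial one: anything the neighbours do not share is treated as an inhomogeneity.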

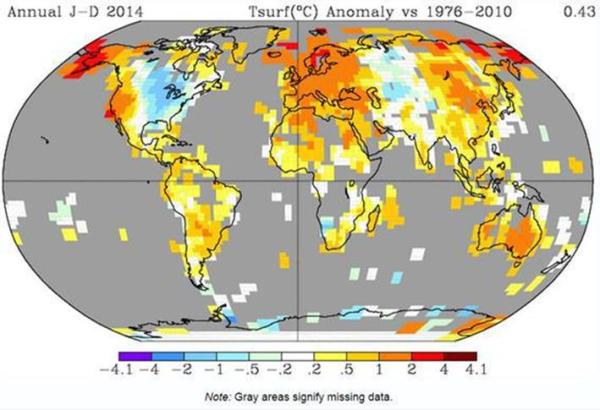

On a wider scale, take a look at the GISTEMP land surface temperature anomaly map for 2014 against 1976-2010 (obtained from here).

Despite being homogenised and smoothed, it is clear that trends differ between regions. Over much of North America there was cooling, bucking the global trend. What this suggests to me is that the greater the distance between weather stations, the greater the likelihood that their climate signals will be different. Most importantly for temperature anomaly calculations, the number of weather stations increased dramatically over the twentieth century. So homogenisation is more likely to end up smoothing out local and sub-regional variations in temperature trends in the early twentieth century, when stations were sparser, than in the later period. This is testable.
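One way such a test could be set up is sketched below. This is my own suggestion, not an established method. For a chosen period, it compares how much station trends vary across a region before and after homogenisation. The function names and the input layout (one list of annual temperatures per station, aligned to a common list of years) are assumptions.

```python
from statistics import pstdev

def linear_trend(years, temps):
    """Ordinary least-squares slope of temps against years (degrees per year)."""
    n = len(years)
    mean_y, mean_t = sum(years) / n, sum(temps) / n
    sxx = sum((y - mean_y) ** 2 for y in years)
    sxy = sum((y - mean_y) * (t - mean_t) for y, t in zip(years, temps))
    return sxy / sxx

def trend_spread(years, stations, start, end):
    """Standard deviation across stations of the trend over the years start..end inclusive."""
    idx = [i for i, y in enumerate(years) if start <= y <= end]
    period = [years[i] for i in idx]
    trends = [linear_trend(period, [s[i] for i in idx]) for s in stations]
    return pstdev(trends)

def smoothing_ratio(years, raw, homogenised, start, end):
    """Spread of homogenised trends divided by spread of raw trends.

    A value below 1 means homogenisation reduced the variation between stations.
    """
    return trend_spread(years, homogenised, start, end) / trend_spread(years, raw, start, end)
```

If my conjecture is right, the ratio computed for an early twentieth century period should be noticeably lower than the ratio for a late twentieth century period in the same region. A similar ratio in both periods would count against it.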

Why should this problem occur with expert scientists? Are they super beings who know the real temperature data, but have manufactured some falsehood? I think it is something much more prosaic. Those who work at the Australian BOM believe that the recent warming is human-caused. In fact, they believe that more than 100% of the warming is human-caused. When looking at outlier data records, or records that show inconsistencies, there is a very human bias. Each time the data is reprocessed they find new inconsistencies, despite having previously corrected the data.

Kevin Marshall